3. Model Optimization

Model optimization improves the performance and efficiency of your model by tuning its hyperparameters and architecture. Comet supports this in two ways:

Hyperparameter Tuning

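- A hyperparameter is a setting chosen before training, such as a regularization strength or a kernel type, that controls the learning process rather than being learned from the data. Hyperparameter tuning means searching for the combination of hyperparameter values that gives the best model performance. The example below runs a grid search with scikit-learn and logs the search space, the best parameters, and the best score to Comet.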

import comet_ml

from sklearn.model_selection import GridSearchCV

from sklearn import tree

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

X, y = load_iris(return_X_y=True)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)

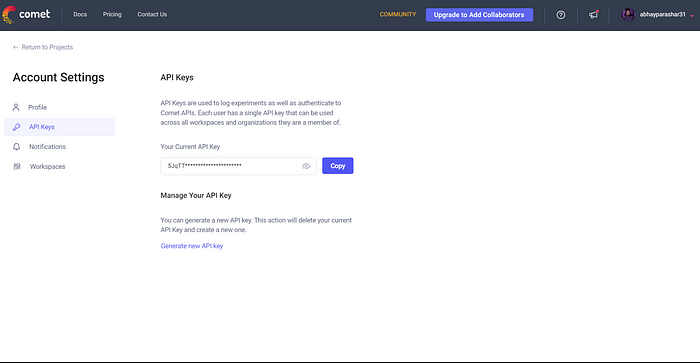

experiment = comet_ml.Experiment(project_name="hyperparameter-optimization", workspace="your-workspace")

param_grid = {

"C": [0.1, 1.0, 10.0],

"gamma": [0.001, 0.01, 0.1],

"kernel": ["linear", "rbf"]

}

experiment.log_parameters(param_grid)

model = tree.DecisionTreeClassifier()

optimizer = GridSearchCV(model, param_grid, cv=3, n_jobs=-1)

optimizer.fit(X_train, y_train)

best_params = optimizer.best_params_

best_score = optimizer.best_score_

experiment.log_parameters(best_params)

experiment.log_metric("accuracy", best_score)
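To make the cost of this grid search concrete, the sketch below expands the same `param_grid` using only Python's standard library and counts how many model fits the search implies with `cv=3`. The `expand_grid` helper is a hypothetical name introduced for illustration; it is not part of scikit-learn or Comet, and scikit-learn offers its own `ParameterGrid` class for the same purpose.

```python
from itertools import product

# Same search space as the param_grid used in the grid search example.
param_grid = {
    "C": [0.1, 1.0, 10.0],
    "gamma": [0.001, 0.01, 0.1],
    "kernel": ["linear", "rbf"],
}


def expand_grid(grid):
    """Return every combination of values in the grid as a list of dicts.

    Hypothetical helper for illustration only.
    """
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


candidates = expand_grid(param_grid)
cv_folds = 3  # matches cv=3 in the GridSearchCV call

n_candidates = len(candidates)
# Each candidate is trained once per fold; the final refit of the
# best candidate on the full training set is not counted here.
n_fits = n_candidates * cv_folds

print(f"{n_candidates} candidates x {cv_folds} folds = {n_fits} model fits")
print("First candidate:", candidates[0])
```

Because the number of candidates is the product of the list lengths, adding one more value to any hyperparameter multiplies the total work, which is worth keeping in mind before widening the grid.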

Compare Model Architecture

- Comet lets you record and monitor different model architectures as you fine-tune them, so you can identify the design that has the most impact. By training neural networks with different layer sizes and activation functions and logging each run, you can compare the results and pick the configuration with the best accuracy.

    import comet_ml
    import numpy as np
    from sklearn.datasets import load_iris
    from sklearn.metrics import accuracy_score
    from sklearn.model_selection import train_test_split
    from tensorflow import keras

    # Iris stands in here for your own dataset; the labels are integer class IDs.
    X, y = load_iris(return_X_y=True)
    X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)
    num_classes = len(np.unique(y))

    model_architectures = [
        {"layers": [64, 32], "activation": "relu"},
        {"layers": [128, 64, 32], "activation": "relu"},
        {"layers": [32, 16], "activation": "sigmoid"},
        {"layers": [128, 64, 32, 16], "activation": "relu"},
    ]

    for architecture in model_architectures:
        # One experiment per architecture, so each run keeps its own parameters and metrics.
        experiment = comet_ml.Experiment(project_name="model-architecture-search", workspace="your-workspace")

        model = keras.Sequential()
        for units in architecture["layers"]:
            model.add(keras.layers.Dense(units, activation=architecture["activation"]))
        # Output layer: one softmax unit per class.
        model.add(keras.layers.Dense(num_classes, activation="softmax"))

        # Sparse loss because y holds integer labels, not one-hot vectors.
        model.compile(optimizer="adam", loss="sparse_categorical_crossentropy", metrics=["accuracy"])
        model.fit(X_train, y_train, epochs=10, batch_size=32, validation_data=(X_test, y_test))

        y_pred = np.argmax(model.predict(X_test), axis=1)
        accuracy = accuracy_score(y_test, y_pred)

        experiment.log_parameter("layers", architecture["layers"])
        experiment.log_parameter("activation", architecture["activation"])
        experiment.log_metric("accuracy", accuracy)
        experiment.end()
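When comparing architectures, accuracy is easier to interpret alongside model size. The sketch below counts the trainable parameters of each candidate using plain Python. It assumes a 4-feature input and a 3-class softmax output layer (as with the Iris dataset); both values, and the `count_dense_params` helper, are assumptions made for illustration and are not part of Keras or Comet.

```python
def count_dense_params(input_dim, hidden_layers, num_classes):
    """Count weights and biases in a stack of fully connected layers.

    Hypothetical helper: each layer contributes n_in * n_out weights
    plus n_out biases, and an output layer of num_classes units is assumed.
    """
    sizes = [input_dim, *hidden_layers, num_classes]
    return sum(n_in * n_out + n_out for n_in, n_out in zip(sizes, sizes[1:]))


INPUT_DIM = 4    # assumption: four input features, as in Iris
NUM_CLASSES = 3  # assumption: three target classes, as in Iris

# Same candidate architectures as in the comparison loop.
model_architectures = [
    {"layers": [64, 32], "activation": "relu"},
    {"layers": [128, 64, 32], "activation": "relu"},
    {"layers": [32, 16], "activation": "sigmoid"},
    {"layers": [128, 64, 32, 16], "activation": "relu"},
]

param_counts = {
    tuple(arch["layers"]): count_dense_params(INPUT_DIM, arch["layers"], NUM_CLASSES)
    for arch in model_architectures
}

for layers, n_params in param_counts.items():
    print(f"layers={list(layers)}: {n_params} trainable parameters")
```

A count like this could be logged next to the accuracy metric for each run, which makes it easier to see whether a larger network actually earns its extra capacity.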