Experiment Management

ML Experiment Tracking

Get automatic experiment tracking for machine learning. Version datasets, debug and reproduce models, visualize performance across training runs, and collaborate with teammates.

Track, Compare, and Manage ML Models Using Your Current Workflow

Add two lines of code to automatically track, manage, and optimize models for faster iteration. Keep using the tools, libraries, and frameworks you use today for ML experiment tracking.

from comet_ml import Experiment

import keras

# Initialize the Comet logger

experiment = Experiment()

# Your code goes here…

import ml.comet.experiment.Experiment;

import ml.comet.experiment.impl.OnlineExperiment;

public class Main {

public static void main(String[] args) {

// Initialize the Comet logger

Experiment experiment = new OnlineExperiment();

// Your code goes here...

}

}

library(reticulate)

# Import comet_ml Experiment from Python

comet_ml <- import("comet_ml")

experiment <- comet_ml$Experiment()

# Your code goes here…

Runs on Any Infrastructure

Deploy wherever your requirements dictate. We treat virtual private cloud (VPC) and on-premises environments as first-class citizens.

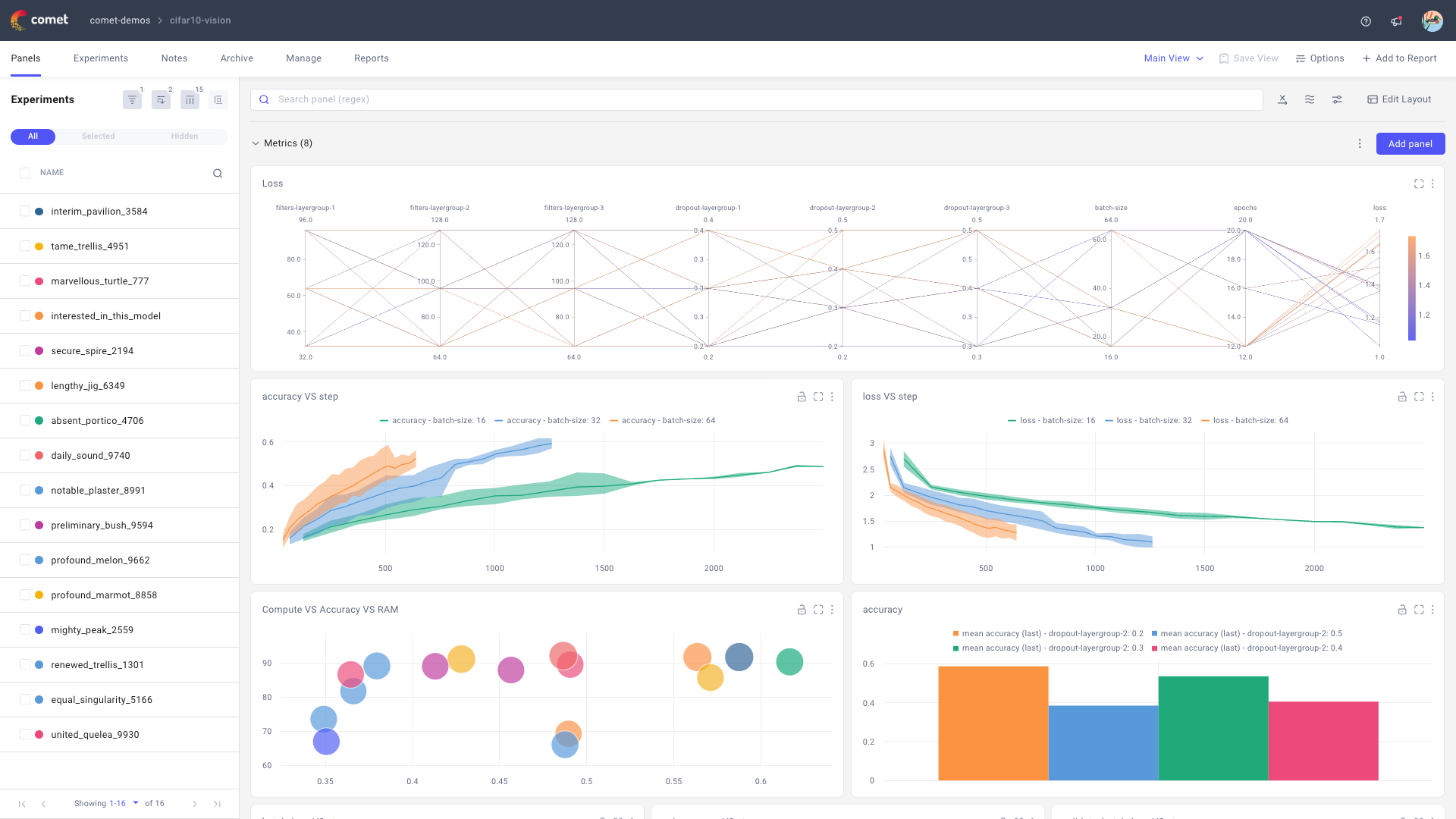

One Platform to Train, Optimize, and Version Your Models

Compare code, hyperparameters, metrics, predictions, dependencies, and system metrics to understand differences in model performance. Introduce a model registry for seamless handoffs to engineering.

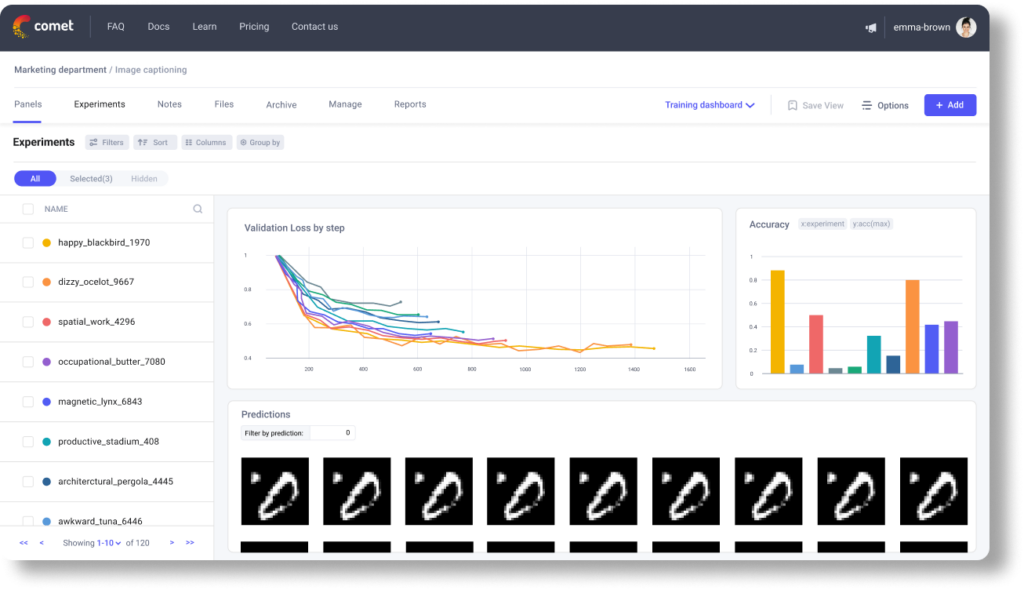
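As a rough sketch of what feeds those comparisons, the snippet below logs hyperparameters and per-epoch metrics through an experiment object. `log_parameters` and `log_metric` are methods of the `comet_ml` `Experiment` class; the `RecordingExperiment` stand-in, the `train` function, and the made-up loss curve are hypothetical and exist only so the example runs without an account or API key.

```python
class RecordingExperiment:
    """Hypothetical local stand-in that mimics two comet_ml Experiment methods."""

    def __init__(self):
        self.parameters = {}
        self.metrics = []  # list of (name, value, step) tuples

    def log_parameters(self, parameters):
        self.parameters.update(parameters)

    def log_metric(self, name, value, step=None):
        self.metrics.append((name, value, step))


def train(experiment, learning_rate=0.1, epochs=3):
    """Toy training loop: logs hyperparameters once, then one loss value per epoch."""
    experiment.log_parameters({"learning_rate": learning_rate, "epochs": epochs})
    loss = 1.0
    for epoch in range(epochs):
        loss *= 1.0 - learning_rate  # made-up loss curve, for illustration only
        experiment.log_metric("loss", loss, step=epoch)
    return loss


# With a configured Comet account, a real comet_ml.Experiment() could be passed instead.
experiment = RecordingExperiment()
final_loss = train(experiment)
```

Because the training function only depends on the two logging methods, the same loop can be exercised locally with the stand-in and then pointed at a real experiment object.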

Experiment Tracking

Capture and visualize the data you need to train, analyze, and retrain models.

Model Management and Registry

Freeze, share, and reuse your highest-performing models with version control.

Best-In-Class Visualizations

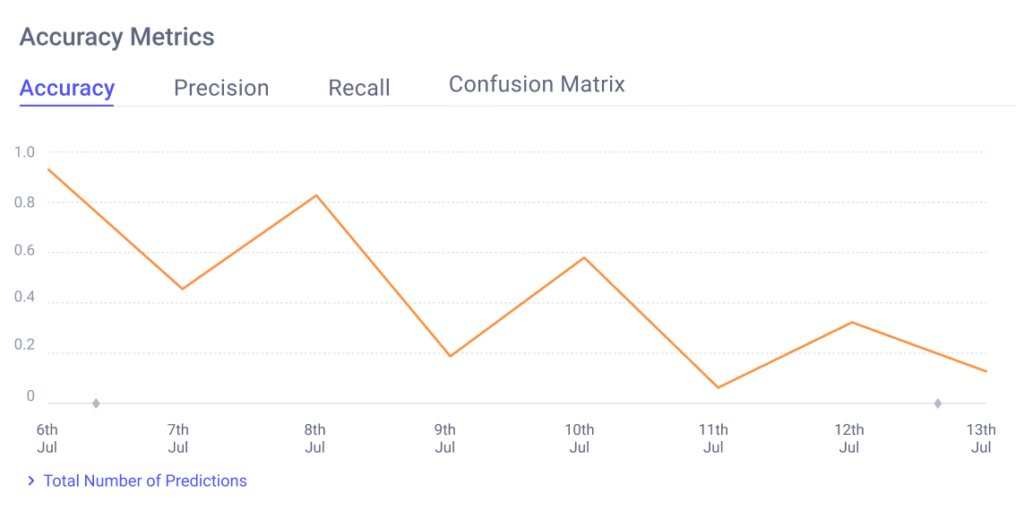

Built-in Charts

Easily track training metrics in real time, compare performance, debug, and evaluate models faster with built-in code panels.

Create Your Own

Easily implement your own dynamic visualizations using Matplotlib, Plotly, or your favorite library with Comet Code Panels.

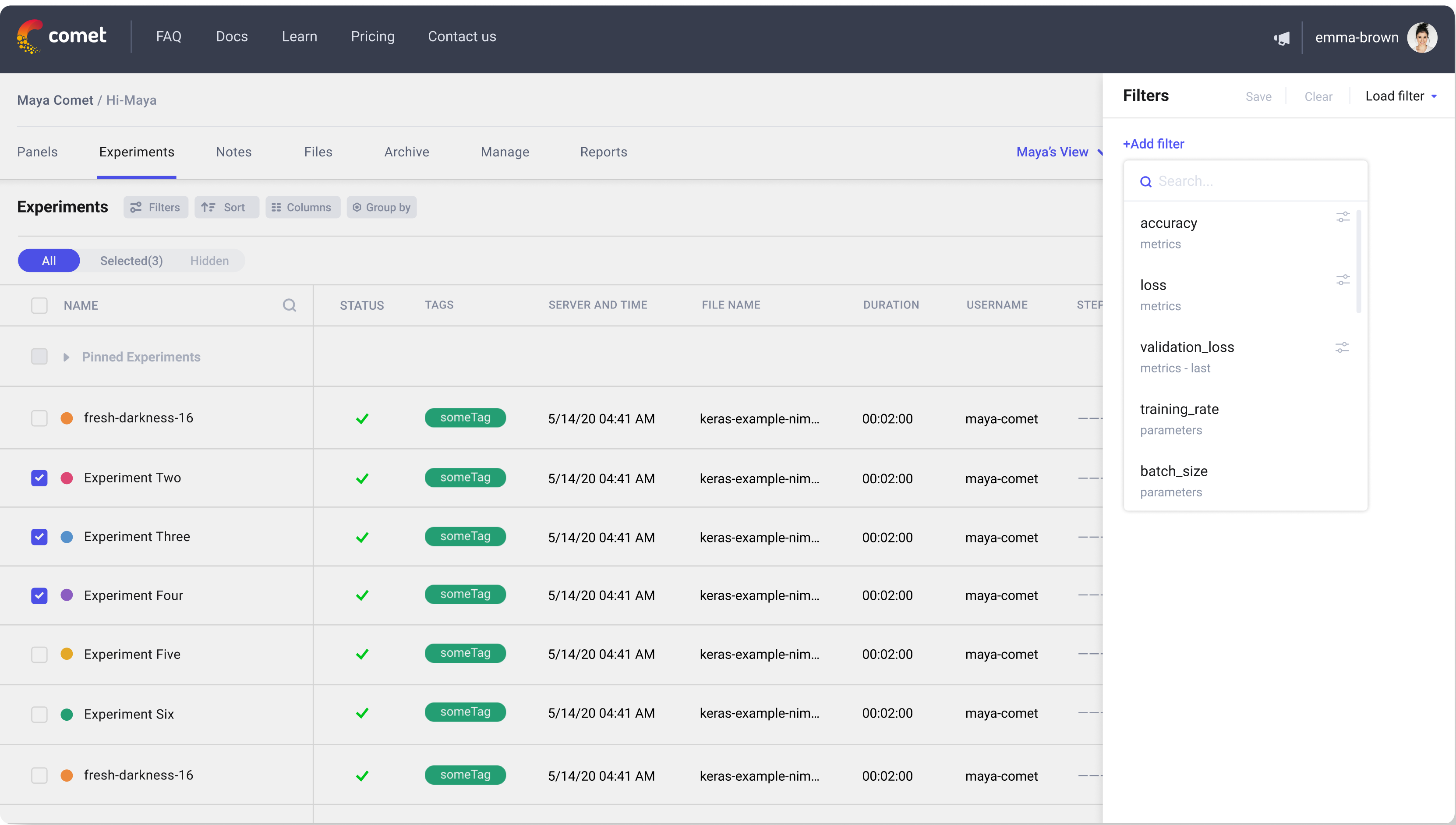

Train and Iterate Faster

Filters to Analyze Training Runs

Create filters for your experiments based on their attributes to support faster analysis and iteration during ML experiment tracking.

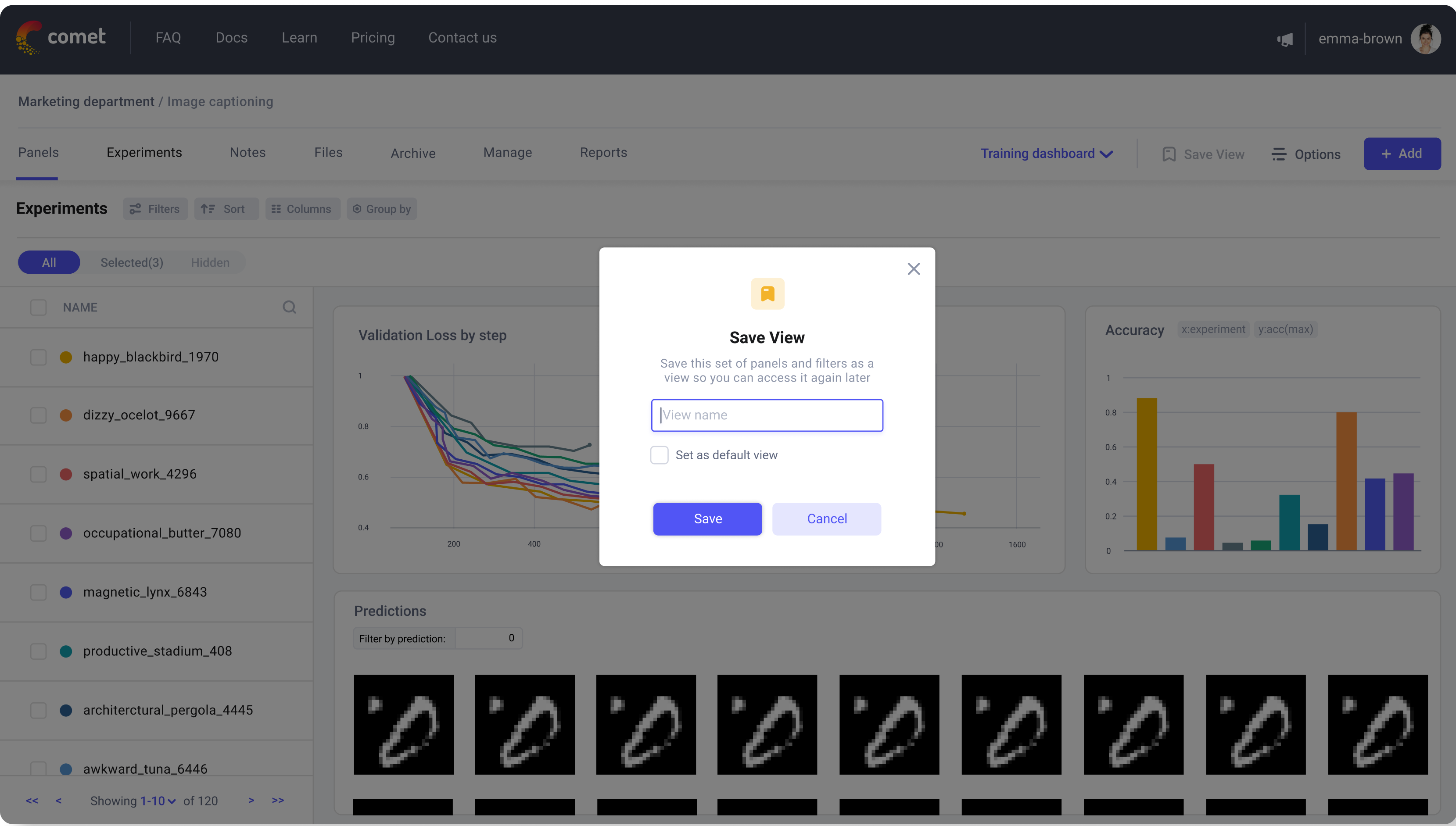
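To make the idea of attribute-based filters concrete, here is a minimal offline sketch. It does not use Comet's API: the `Run` record, the `filter_runs` helper, and the sample values are all hypothetical, and it only illustrates combining conditions on parameters and metrics to narrow a set of training runs.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """Hypothetical record of one training run; not a Comet data type."""
    name: str
    parameters: dict = field(default_factory=dict)
    metrics: dict = field(default_factory=dict)


def filter_runs(runs, *predicates):
    """Keep the runs for which every predicate returns True."""
    return [run for run in runs if all(p(run) for p in predicates)]


# Invented sample data, for illustration only.
runs = [
    Run("baseline", {"optimizer": "sgd", "learning_rate": 0.1}, {"accuracy": 0.81}),
    Run("adam-small", {"optimizer": "adam", "learning_rate": 0.001}, {"accuracy": 0.90}),
    Run("adam-large", {"optimizer": "adam", "learning_rate": 0.01}, {"accuracy": 0.86}),
]

selected = filter_runs(
    runs,
    lambda run: run.parameters["optimizer"] == "adam",
    lambda run: run.metrics["accuracy"] >= 0.88,
)
print([run.name for run in selected])
```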

Fully Customizable Project Views

Collect your experiments in a project, where you can manage, analyze, share, and make notes on them.

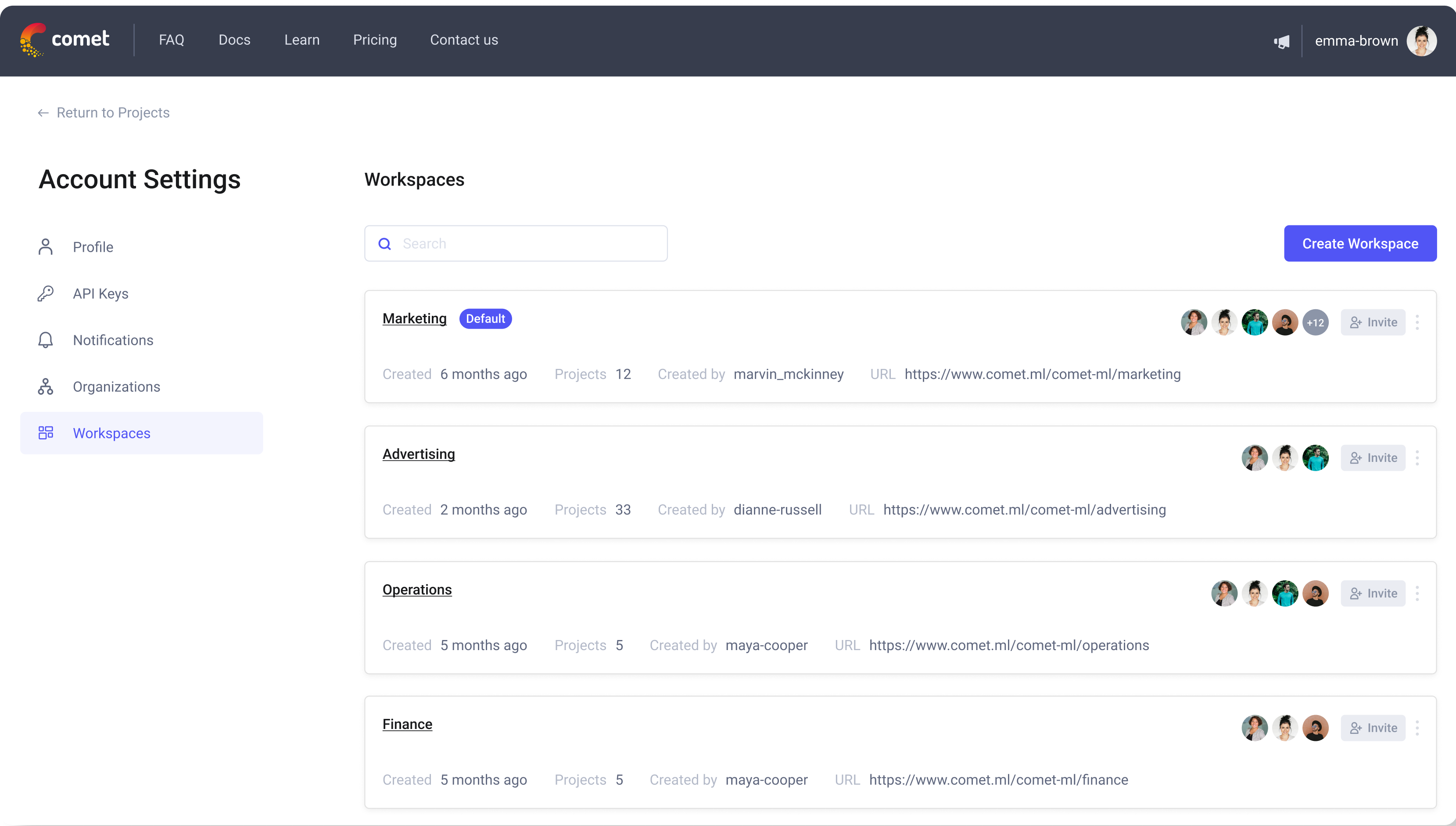

Workspaces to Collaborate

Use your workspace for personal and public projects. Create team workspaces for easy collaboration.

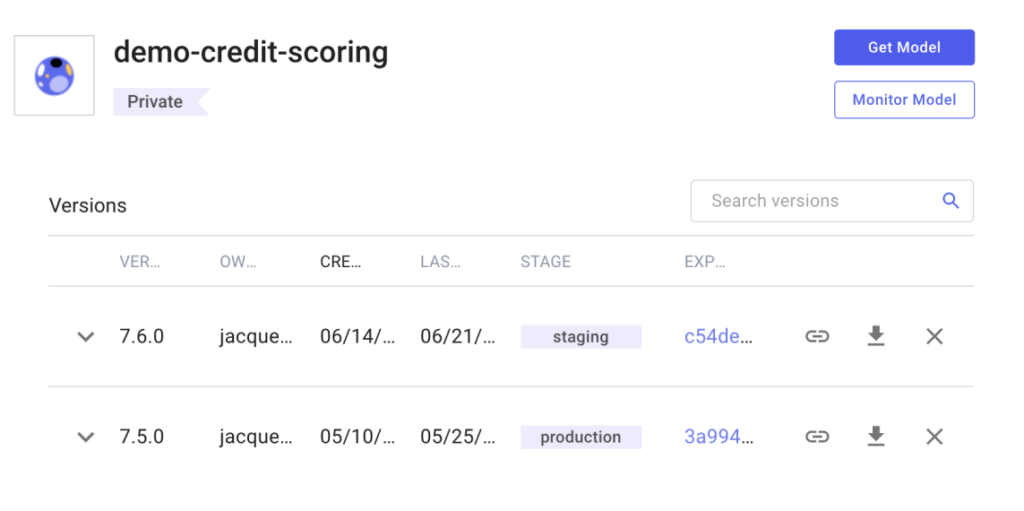

Publish Models to a Registry

Save model versions from the best experiments. Manage deployment stages with tags and webhooks.

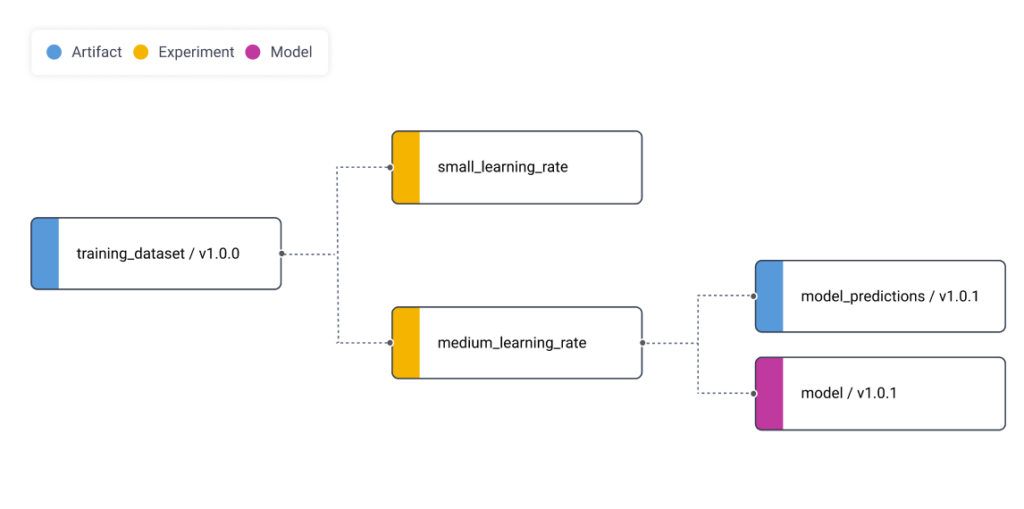
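The workflow of saving a version from the best experiment and tagging its deployment stage might look like the sketch below. This is not Comet's registry API: `ToyRegistry`, the model name `churn-model`, the run names, and the scores are hypothetical, chosen only to show how versions and stage tags relate.

```python
class ToyRegistry:
    """Hypothetical in-memory registry; it only illustrates versions plus stage tags."""

    def __init__(self):
        self.versions = {}  # model name -> list of version records

    def register(self, model_name, run_name, metric):
        records = self.versions.setdefault(model_name, [])
        record = {
            "version": len(records) + 1,
            "run": run_name,
            "metric": metric,
            "tags": set(),
        }
        records.append(record)
        return record

    def tag(self, model_name, version, stage):
        # Version numbers start at 1, list indexes at 0.
        self.versions[model_name][version - 1]["tags"].add(stage)


# Invented validation scores per run, for illustration only.
results = {"run-a": 0.84, "run-b": 0.91, "run-c": 0.88}
best_run = max(results, key=results.get)

registry = ToyRegistry()
record = registry.register("churn-model", best_run, results[best_run])
registry.tag("churn-model", record["version"], "staging")
```

Keeping the originating run name on each version record is what lets a version be traced back to its experiment later.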

Easily track a model's lifecycle and lineage, from model binary through experiment tracking to training datasets.

Compare the performance of models in production with their baselines in training.

Get started today for free.

You don’t need a credit card to sign up, and your Comet account comes with a generous free tier you can actually use—for as long as you like.