-

SelfCheckGPT for LLM Evaluation

Detecting hallucinations in language models is challenging. There are three general approaches: The problem with many LLM-as-a-Judge techniques is that…

-

LLM Juries for Evaluation

Evaluating the correctness of generated responses is an inherently challenging task. LLM-as-a-Judge evaluators have gained popularity for their ability to…

-

LLM Monitoring & Maintenance in Production Applications

Generative AI has become a transformative force, revolutionizing how businesses engage with users through chatbots, content creation, and personalized recommendations.…

-

G-Eval for LLM Evaluation

LLM-as-a-Judge evaluators have gained widespread adoption due to their flexibility, scalability, and close alignment with human judgment. They excel at…

-

BERTScore For LLM Evaluation

BERTScore represents a pivotal shift in LLM evaluation, moving beyond traditional heuristic-based metrics like BLEU and ROUGE to a…

-

Building ClaireBot, an AI Personal Stylist Chatbot

Follow the evolution of my personal AI project and discover how to integrate image analysis, LLMs, and LLM-as-a-Judge evaluation…

-

Perplexity for LLM Evaluation

Perplexity is, historically speaking, one of the “standard” evaluation metrics for language models. And while recent years have seen a…

-

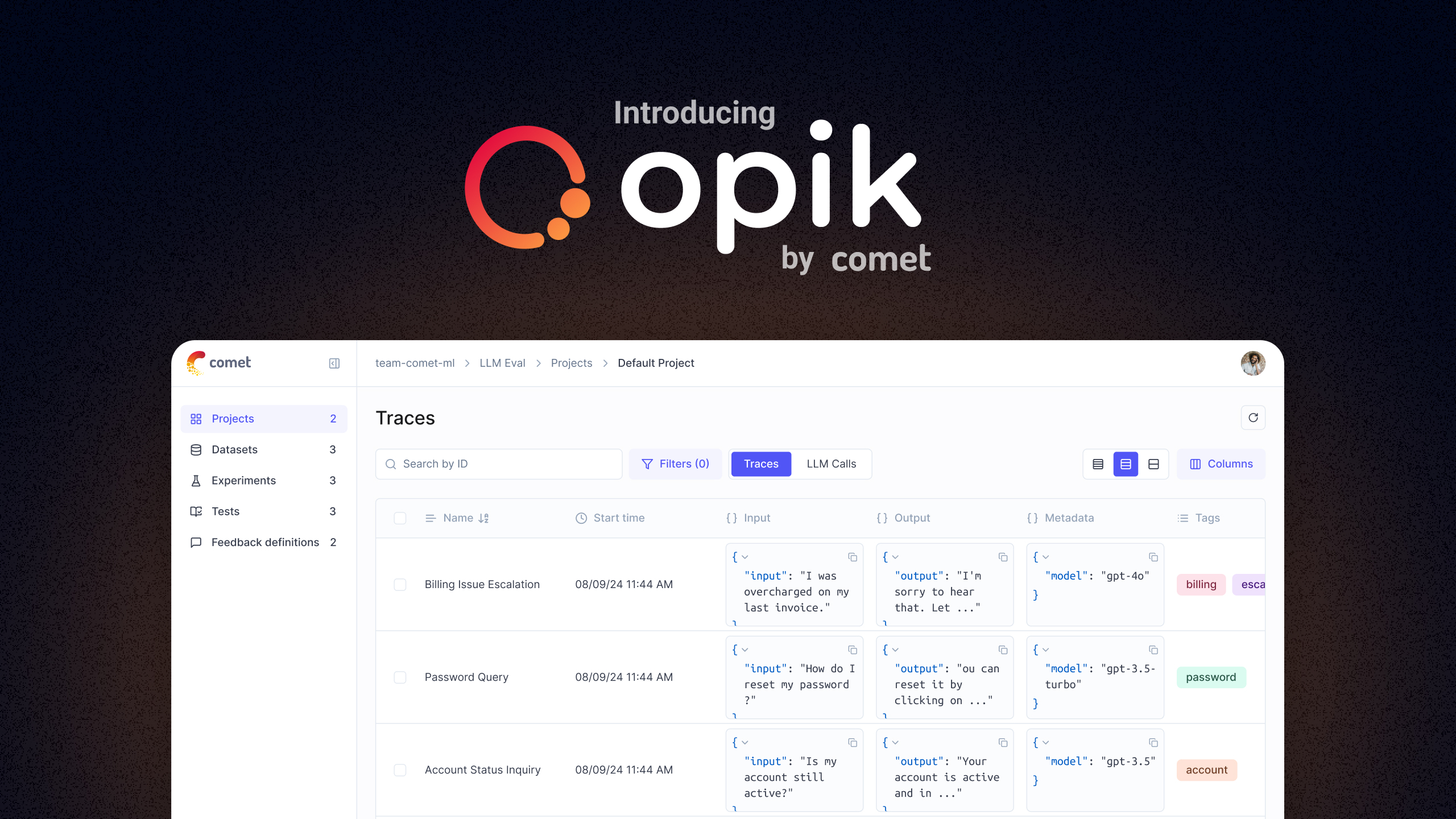

Meet Opik: Your New Tool to Evaluate, Test, and Monitor LLM Applications

Today, we’re thrilled to introduce Opik – an open-source, end-to-end LLM development platform that provides the observability tools you need…

-

Building a Low-Cost Local LLM Server to Run 70 Billion Parameter Models

A guest post from Fabrício Ceolin, DevOps Engineer at Comet. Inspired by the growing demand for large-scale language models, Fabrício…

Category: Comet Community Hub
