If you want to jump straight to code, head to the Getting started guide to add tracing in under five minutes.

LLM applications are more than a single API call. A typical agent involves retrieval steps, tool calls, prompt assembly, multiple LLM invocations, and post-processing — all wired together in ways that are invisible at runtime. When something goes wrong, you need to see exactly what happened at every step.

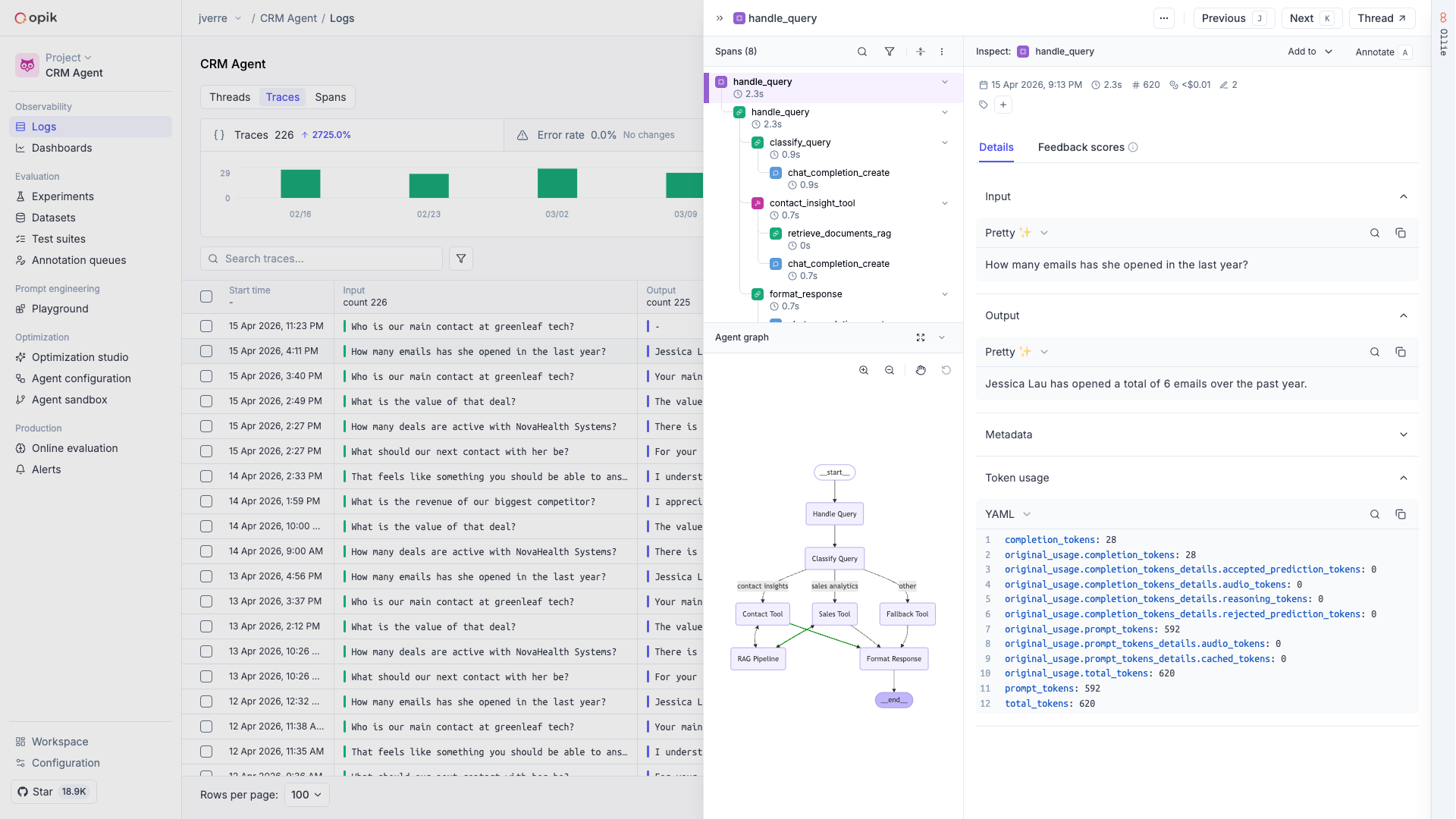

Opik gives you full visibility into every request your agent handles. Every LLM call, every tool invocation, every retrieval step is captured as a trace you can inspect, search, and analyze.

Debugging LLM applications without observability means guessing. You see the final output but not why the model hallucinated, which retrieval step returned irrelevant context, or where latency spiked.

With Opik, you can:

Full execution trees with inputs, outputs, timing, and metadata for every step

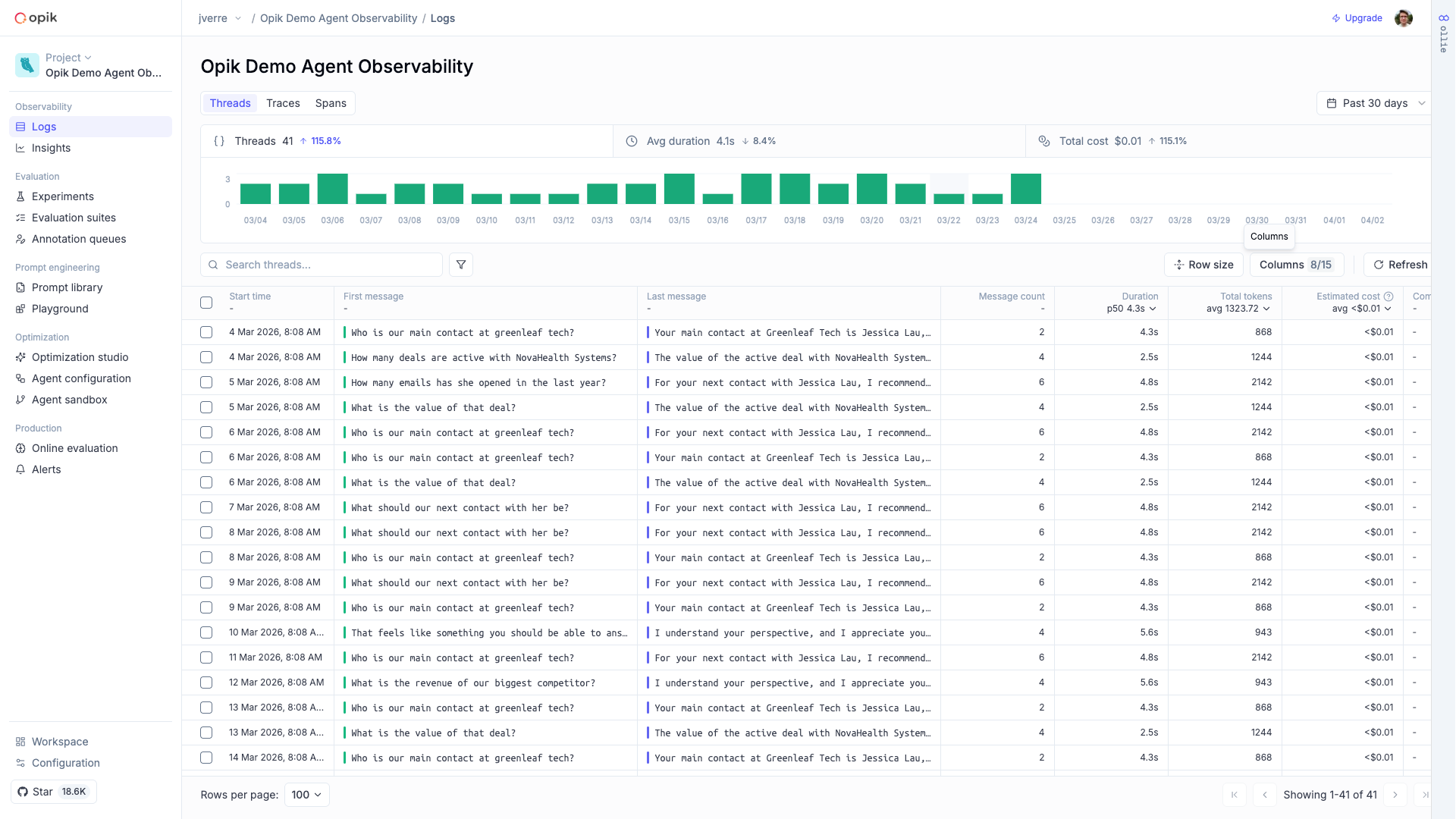

Multi-turn threads that group related traces into coherent sessions

Token usage and spending broken down by model, provider, and trace

Images, audio, video, and files logged alongside your traces

Qualitative and quantitative scores attached to individual traces

Visual execution graphs showing how your agent’s steps connect

The fastest way to add tracing is with opik-skills: install the skill, then ask your coding agent to handle the rest.

This works with Claude Code, Cursor, Codex, OpenCode, and other coding agents. You can also instrument manually with the SDK.
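As a minimal sketch of manual instrumentation in Python, the example below wraps two functions with the SDK's `track` decorator. The function names and the canned return values are hypothetical stand-ins for a real retrieval step and LLM call, and the guarded import is only there so the sketch still runs on a machine without the SDK installed. It also assumes the SDK has already been configured to point at your Opik workspace.

```python
try:
    from opik import track  # decorator provided by the Opik Python SDK
except ImportError:
    # Fallback so this sketch runs without the SDK installed.
    # It records nothing and simply returns the function unchanged.
    def track(func):
        return func


@track
def retrieve_context(question: str) -> list[str]:
    # Hypothetical stand-in for a retrieval step; returns canned text.
    return [f"Document snippet related to: {question}"]


@track
def answer_question(question: str) -> str:
    # Calling another tracked function from here is expected to show up
    # as a nested step under this function's trace.
    context = retrieve_context(question)
    # Hypothetical stand-in for an LLM call, so the example needs no API key.
    return f"Answer based on {len(context)} snippet(s)."


if __name__ == "__main__":
    print(answer_question("What is Opik?"))
```

The decorator approach keeps instrumentation out of the function bodies, so the application logic reads the same with or without tracing.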

Every request creates a trace with detailed span-level information. You can inspect the full execution tree, see inputs and outputs at each step, and filter by duration, cost, status, or tags.
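To make the idea of an execution tree concrete, here is a small conceptual model of a trace and its spans, with a filter by duration and by status. This is an illustrative sketch only: the `Span` class, its fields, and the sample timings are assumptions made for this example and are not Opik's actual data schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    # Hypothetical span record: one step in a request's execution tree.
    name: str
    duration_ms: float
    status: str = "ok"
    tags: set[str] = field(default_factory=set)
    children: list["Span"] = field(default_factory=list)


def walk(span):
    """Yield a span and all of its descendants, depth first."""
    yield span
    for child in span.children:
        yield from walk(child)


def slow_spans(root, threshold_ms):
    """Return the names of spans that took at least threshold_ms."""
    return [s.name for s in walk(root) if s.duration_ms >= threshold_ms]


# Made-up sample trace: a root span with three child steps.
trace = Span("handle_request", 1200.0, children=[
    Span("retrieve_context", 150.0, tags={"retrieval"}),
    Span("llm_call", 980.0, tags={"llm"}),
    Span("post_process", 40.0, status="error"),
])

print(slow_spans(trace, 500.0))
print([s.name for s in walk(trace) if s.status == "error"])
```

In this sample, the duration filter isolates the root request and the LLM call, while the status filter isolates the failing post-processing step, which is the kind of narrowing the trace filters are meant to support.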

Use traces to debug failures, identify slow steps, and track quality over time. Attach feedback scores, run evaluations against datasets, and use Ollie — Opik’s AI assistant — to help root-cause issues automatically.

Opik has first-class support for 30+ frameworks across Python, TypeScript, and OpenTelemetry, so you can start capturing traces without changing how your application is built.