LLM agents fail in production in ways you can’t predict upfront. A prompt that works for 90% of queries might hallucinate on edge cases, ignore context, or produce verbose responses when users expect concise answers. Manual review doesn’t scale, and you can’t anticipate every failure mode before shipping.

You need automated regression testing — but not the kind where you sit down and write a test suite from scratch. The most effective test suites are built incrementally, from real production failures. Every time you find a bad response, you turn it into a test case. Over time, your suite becomes a comprehensive guard against the specific failure modes your agent actually encounters.

Test suites are created as you debug and improve your agent — they grow organically from real failures, not from a separate test-writing phase.

Start in the Opik dashboard. Browse traces, filter by error status or low feedback scores, and click into a trace to see the full span tree — every LLM call, tool invocation, and retrieval step with its inputs, outputs, and latencies.
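The same "error status or low feedback score" filter can be reproduced offline, for example to triage a batch of exported traces. The sketch below does not use the Opik SDK. It assumes traces have been copied into a hypothetical `TraceRecord` shape (id, input, output, optional error, and a dict of feedback scores). The metric name `helpfulness` and the 0.5 threshold are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TraceRecord:
    """Hypothetical local copy of a trace's top-level fields (not an Opik type)."""

    trace_id: str
    input: str
    output: str
    error: str | None = None
    feedback_scores: dict[str, float] = field(default_factory=dict)


def find_failures(
    traces: list[TraceRecord], metric: str, min_score: float
) -> list[TraceRecord]:
    """Keep traces that errored or scored below `min_score` on `metric`.

    A trace with no score for `metric` is kept only if it errored.
    """
    failures = []
    for trace in traces:
        score = trace.feedback_scores.get(metric)
        low_score = score is not None and score < min_score
        if trace.error is not None or low_score:
            failures.append(trace)
    return failures


# Illustrative data only; "helpfulness" is an assumed feedback-score name.
traces = [
    TraceRecord("t1", "What is the refund window?", "30 days.",
                feedback_scores={"helpfulness": 0.9}),
    TraceRecord("t2", "Summarize the ticket", "", error="TimeoutError"),
    TraceRecord("t3", "Who approved the change?", "Alice approved it.",
                feedback_scores={"helpfulness": 0.2}),
]

for trace in find_failures(traces, metric="helpfulness", min_score=0.5):
    print(trace.trace_id, trace.error or "low score")
```

Treating "errored" and "scored badly" as one candidate list matches how the dashboard filters are described. Traces with no feedback at all are not flagged, because a missing score is not evidence of a failure.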

Turn the failure into a test case. Add the trace to a test suite with a natural-language assertion that captures the expected behavior — for example, “The response must not hallucinate facts not present in the context”. You can do this through Ollie (Opik’s AI assistant), the UI, or the SDK.
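Conceptually, a test case pairs the failing input (and any context the agent saw) with one or more natural-language assertions. The following is a minimal in-memory sketch of that data model. It is not Opik's test-suite API, and `RegressionCase`, `RegressionSuite`, and `add_from_trace` are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class RegressionCase:
    """Hypothetical test case: the failing input plus what 'correct' means."""

    source_trace_id: str
    user_input: str
    context: str
    assertions: list[str] = field(default_factory=list)


@dataclass
class RegressionSuite:
    """Hypothetical in-memory suite keyed by the trace each case came from."""

    name: str
    cases: dict[str, RegressionCase] = field(default_factory=dict)

    def add_from_trace(
        self, trace_id: str, user_input: str, context: str, assertion: str
    ) -> RegressionCase:
        """Attach a natural-language assertion to the case for `trace_id`.

        The case is created on first use; flagging the same trace again adds
        a further assertion instead of a duplicate case.
        """
        if trace_id not in self.cases:
            self.cases[trace_id] = RegressionCase(trace_id, user_input, context)
        case = self.cases[trace_id]
        if assertion not in case.assertions:
            case.assertions.append(assertion)
        return case


suite = RegressionSuite("support-agent")
question = "Who approved the change?"
context = "Change log: approved by Bob."
suite.add_from_trace(
    "t3", question, context,
    "The response must not hallucinate facts not present in the context",
)
suite.add_from_trace("t3", question, context, "The response must name Bob as the approver")
print(len(suite.cases), len(suite.cases["t3"].assertions))  # 1 2
```

Keying cases by the source trace keeps the link back to the production failure, so anyone reading a failing case can open the original span tree.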

Fix the root cause. Update a prompt via the Prompt Library, adjust tool definitions, or change retrieval parameters. Ollie can help diagnose the issue and suggest fixes.

Each cycle adds a new test case. Over time, the suite becomes a regression guard tailored to the failure modes your agent actually hits: rerunning it after each change confirms that the latest fix works and that earlier fixes still hold.
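A suite only guards against regressions if the whole suite is rerun after every change. The sketch below assumes a hypothetical `judge(assertion, context, output)` callable that decides whether an output satisfies an assertion. In practice that role would typically be filled by an LLM-as-judge. Here, a crude word-overlap stand-in keeps the example runnable. None of these names are Opik APIs.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable

# Hypothetical signatures; none of these are Opik APIs.
Agent = Callable[[str, str], str]        # (user_input, context) -> output
Judge = Callable[[str, str, str], bool]  # (assertion, context, output) -> passed?


@dataclass
class RegressionCase:
    user_input: str
    context: str
    assertions: list[str]


def run_suite(
    cases: list[RegressionCase], agent: Agent, judge: Judge
) -> list[tuple[str, str]]:
    """Run every case through the agent; return failing (input, assertion) pairs."""
    failures = []
    for case in cases:
        output = agent(case.user_input, case.context)
        for assertion in case.assertions:
            if not judge(assertion, case.context, output):
                failures.append((case.user_input, assertion))
    return failures


def toy_judge(assertion: str, context: str, output: str) -> bool:
    """Crude stand-in for an LLM judge, for illustration only.

    It ignores the assertion text and passes only if every word of the
    output also appears in the context (a rough "no new facts" check).
    """
    def words(text: str) -> set[str]:
        return {w.strip(".,:;!?").lower() for w in text.split()}

    return words(output) <= words(context)


cases = [
    RegressionCase(
        "Who approved the change?",
        "Change log: approved by Bob.",
        ["The response must not hallucinate facts not present in the context"],
    )
]
print(run_suite(cases, lambda q, ctx: "Approved by Bob.", toy_judge))  # prints []
print(run_suite(cases, lambda q, ctx: "Alice approved it.", toy_judge))
```

In a CI job, a non-empty result could fail the build. That way, a fix for the newest case is accepted only if every earlier case still passes.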

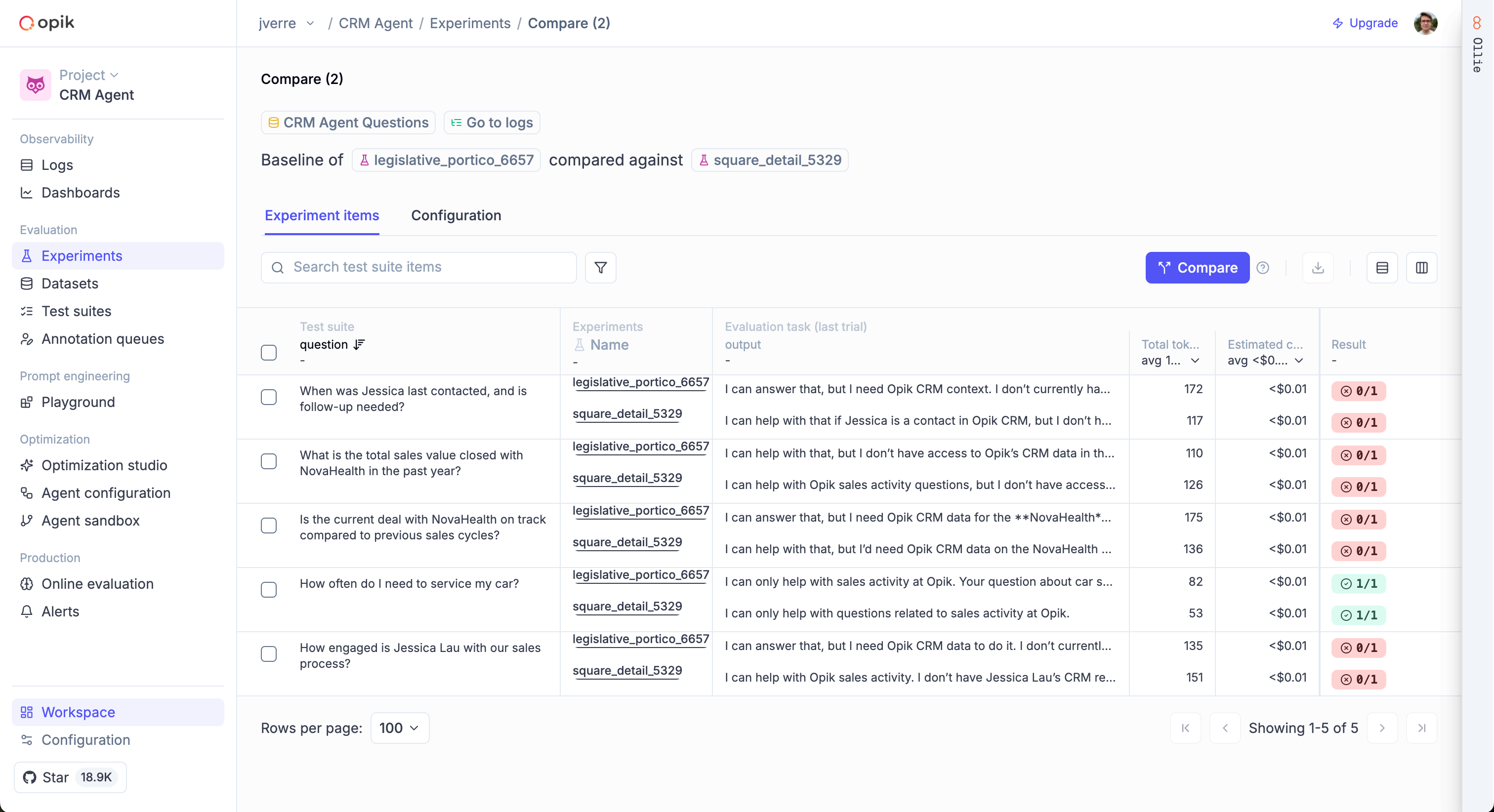

Opik provides two complementary approaches to evaluation: