This guide shows you how to integrate Opik with your existing agent so that you can log your first traces and start tracking your prompts and agent configuration.

Before you begin, you’ll need to choose how you want to use Opik:

Opik makes it easy to integrate with your existing LLM application. Pick the tab that matches your stack and follow the three steps to log your first trace:

If you are using the Python function decorator, integrate as follows:

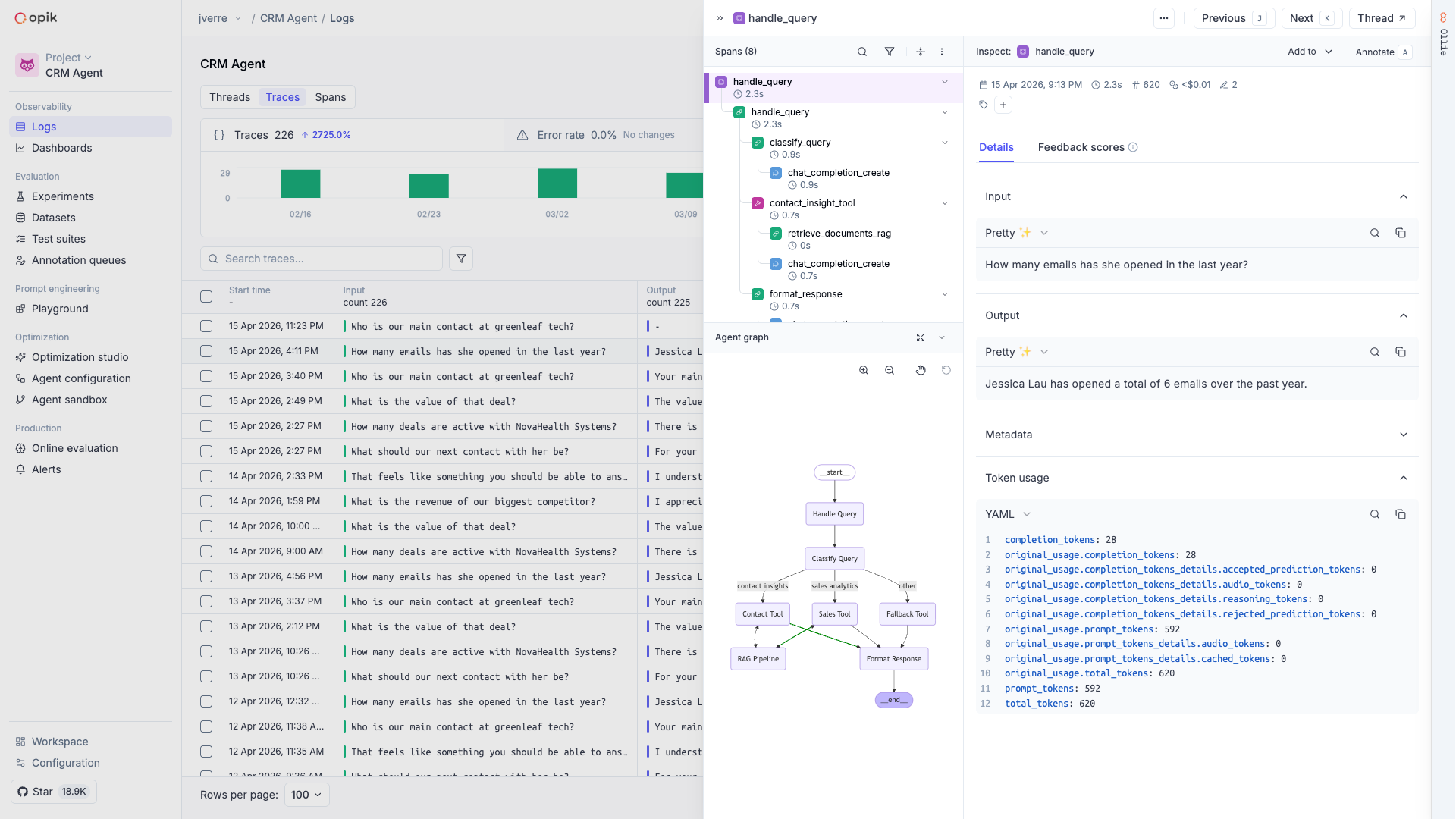
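As a rough illustration of the decorator approach, the sketch below wraps two functions with Opik's `track` decorator. It assumes the `opik` package is installed and that you have already configured it (for example by running `opik configure`). The function names `retrieve_context` and `answer_question` are hypothetical placeholders for your own agent code, and the fallback decorator exists only so the snippet still runs where the SDK is missing.

```python
# Minimal sketch, assuming `pip install opik` and a prior `opik configure`.
try:
    from opik import track
except ImportError:
    # Fallback for illustration only: a no-op decorator so the example
    # still runs without the SDK. Nothing is logged in this case.
    def track(func):
        return func


@track
def retrieve_context(question: str) -> list[str]:
    # Hypothetical retrieval step; replace with your own lookup logic.
    return [f"doc about {question}"]


@track
def answer_question(question: str) -> str:
    # Hypothetical agent entry point. Calling another tracked function
    # from here should appear as a nested step under the same trace.
    context = retrieve_context(question)
    return f"Answer based on {len(context)} document(s)"


if __name__ == "__main__":
    print(answer_question("What is Opik?"))
```

Decorating only the entry point is usually enough to get a first trace; decorating the inner helpers as well gives a more detailed breakdown of each call.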

After running your application, you will start seeing your traces in Opik and you can use Ollie to analyze them and improve your agent.

If you don’t see traces appearing, reach out to us on Slack or raise an issue on GitHub and we’ll help you troubleshoot.

Now that you have logged your first traces, here’s what to explore next: