In standard software engineering, developers use proven, repeatable workflows to develop, test, debug, and update software products. They use intelligent debugging tools to quickly resolve problems, run tests to make sure fixes are effective, and automate the whole process so a fix can be implemented, tested, and integrated into the product in minutes.

The same can’t quite be said for building AI agents. To be sure, agents are software too, yet it takes a lot more manual effort to make them work reliably. That’s because agents are so much more than just code. They come with inherent challenges that make structured development harder. Their behavior is driven by multiple interacting prompts and unpredictable user inputs, not to mention the complex language models that power their intelligence. They can respond in endlessly varied ways to the same input, and are often expected to handle situations the developer never anticipated. The result is more failure modes, and less predictable ones, which makes issues hard to diagnose and fix.

Agents are unstructured by design, which makes them incompatible with the strictly structured processes and tools used in standard software development. To make agents self-improving, we need new tools that are purpose-built to handle the nuance and complexity of agents.

Our goal with Opik is to automate as much of this process as we can, so you can test and fix an agent quickly and move on from each fix with confidence.

Our Vision for Self-Improving Agents

Our vision is to make building agents as automated and straightforward as standard software development, so that your agent becomes self-improving. To do this, Opik builds a system around your agent that makes improvements happen seamlessly.

Your agent becomes part of a continuous loop in which purpose-built tools observe its behavior, diagnose problems, implement fixes, and test the results. The agent improves, but it’s the system around it that grounds each outcome against a steady reference point.

Here’s what that looks like in practice:

All your development, debugging, and iteration happens in one place: a central hub with everything you need to take your agent from its earliest prototype to an agent you can trust in production. It brings together your agent’s logs, test cases, and feedback alongside a powerful coding assistant that can make improvements directly to your agent’s code.

You start from scratch, describing in natural language what your agent should do, along with key implementation requirements. The coding assistant in your interface, specialized for agent development, generates a prototype of your agent and shows you its responses to a few initial test scenarios. You give feedback; it implements a change, writes a new test case to cover it, and runs a regression test to make sure nothing else broke. The process continues, with your guidance only where needed.

As you develop, each mistake your agent makes leads to an automatic improvement and a new test in your test suite. Meanwhile, the interface learns how your agent works and what you expect from it. Before long, it starts identifying problems on its own: autonomously testing scenarios, reviewing logs, finding issues, and suggesting fixes for you to approve.

You no longer need to manually hunt for problems and implement fixes. Your development interface helps your agent improve on its own, with every change backed by a test that makes sure it sticks.
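The loop described above, where each fixed mistake becomes a new test that every later change must pass, can be sketched as a small regression suite. This is a minimal illustration under stated assumptions, not Opik’s Test Suites API: `SuiteItem`, `toy_agent`, and `run_suite` are hypothetical names, and each assertion is a plain Python predicate on the agent’s output (a real agent suite might use more flexible checks, such as LLM-based judges).

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SuiteItem:
    """One hypothetical test suite item: an input plus a check on the output."""
    name: str
    user_input: str
    assertion: Callable[[str], bool]  # returns True if the output is acceptable


def toy_agent(message: str) -> str:
    # Hypothetical stand-in for a real agent; a real one would call an LLM.
    text = message.lower()
    if "refund" in text:
        return "I can help you request a refund. Please share your order number."
    if "hours" in text:
        return "We are open 9am to 5pm, Monday to Friday."
    return "Could you tell me more about what you need?"


def run_suite(agent: Callable[[str], str], items: list[SuiteItem]) -> dict[str, bool]:
    # Run every item against the agent and record pass/fail by name.
    return {item.name: item.assertion(agent(item.user_input)) for item in items}


suite = [
    SuiteItem("refund asks for order number", "I want a refund",
              lambda out: "order number" in out),
    SuiteItem("hours mention weekdays", "What are your hours?",
              lambda out: "Monday" in out),
]

# After a mistake is found and fixed, append an item that covers it, so the
# fix is re-checked on every future run (the regression part of the loop).
suite.append(SuiteItem("unclear request asks a follow-up", "hmm",
                       lambda out: out.endswith("?")))

results = run_suite(toy_agent, suite)
failed = [name for name, ok in results.items() if not ok]
```

Because the suite only grows, a later change that breaks an earlier behavior shows up as a failing item on the next run instead of slipping through unnoticed.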

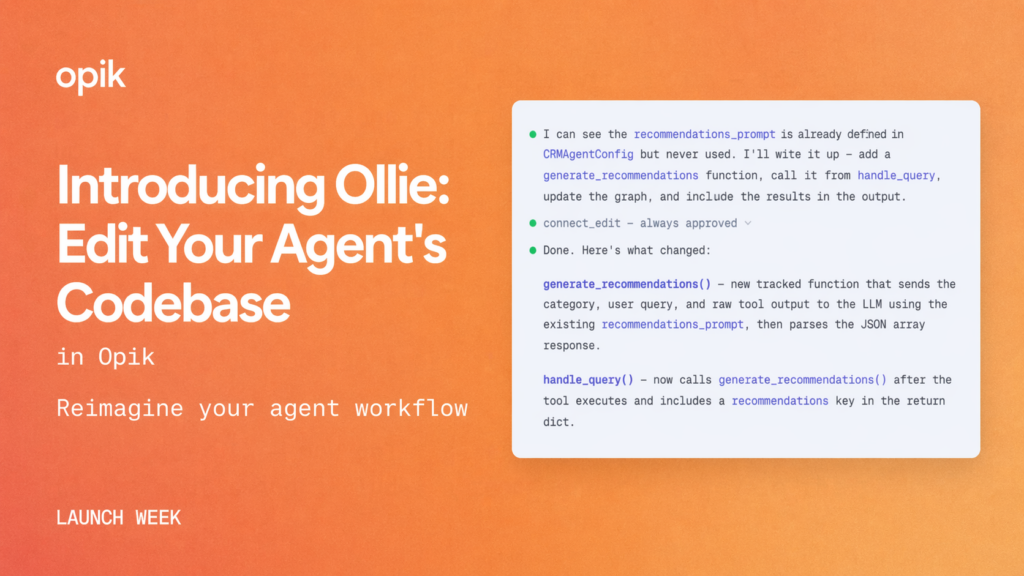

Introducing Ollie: The First Step

Ollie is a powerful coding assistant built right into the Opik platform. With full access to your agent’s logs and test suites, it can also read your code, run your agent, and make changes directly, closing the loop between observability and action.

Once you have a prototype for your agent, connect it to Opik. From there, Ollie works alongside you across the full development cycle. You can use it to:

Instrument your agent for observability. Ollie can automatically set up tracing in your agent’s code so that every run is logged to the platform. This is the key first step: it gives Ollie the context it needs to improve your agent.

Analyze traces and navigate the platform on your behalf. Ask Ollie to search through your agent’s activity, filter traces, and surface patterns. It has full access to the Opik UI and can navigate it autonomously, creating views, pulling up specific runs, and running its own analysis to help you understand what’s happening.

Diagnose problems and fix them directly in your code. When you spot an issue (or Ollie surfaces one), it can diagnose the root cause, implement the fix in your codebase, and generate a new test case to prevent future regressions.

Generate test cases and assertions, and run your test suites. Ollie can build and manage your test coverage directly. Ask it to generate test suite items, write assertions, and run your full test suite on demand.
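Instrumenting an agent for observability, the first step in the list above, means wrapping each step so that its inputs, outputs, errors, and timing are recorded. Opik’s Python SDK provides a `track` decorator for this purpose; the sketch below does not use it and is not Opik’s implementation. It shows the idea with the standard library only, using a hypothetical `traced` decorator, an in-memory `TRACE_LOG` standing in for the platform, and a toy `answer` function standing in for an agent step.

```python
import functools
import time
import uuid
from typing import Any, Callable

# Hypothetical in-memory stand-in for a tracing backend; a real setup would
# send these records to an observability platform instead.
TRACE_LOG: list[dict[str, Any]] = []


def traced(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Record inputs, output (or error), and latency for every call to fn."""

    @functools.wraps(fn)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        record: dict[str, Any] = {
            "id": str(uuid.uuid4()),
            "name": fn.__name__,
            "input": {"args": args, "kwargs": kwargs},
        }
        start = time.perf_counter()
        try:
            result = fn(*args, **kwargs)
            record["output"] = result
            return result
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on success and on failure, so every call leaves a trace.
            record["duration_s"] = time.perf_counter() - start
            TRACE_LOG.append(record)

    return wrapper


@traced
def answer(question: str) -> str:
    # Hypothetical agent step; a real agent would call an LLM here.
    return "Paris" if "France" in question else "I don't know"
```

Calling `answer("What is the capital of France?")` returns `"Paris"` and appends one record to `TRACE_LOG` with the call’s name, input, output, and duration, which is the kind of raw material a tool like Ollie would analyze.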

When you use Ollie to improve your agent, you get more than a standalone coding assistant. Ollie already has the full context of your agent’s activity and is specialized for agent development. It works inside the platform where all of your agent’s data lives, and it’s linked to the codebase where your agent runs. It goes from spotting a problem in your traces to implementing a fix in your code to writing a test. It’s all part of a single cohesive workflow, scaffolded by comprehensive observability and structured evaluation suites.

We’re building toward a future where agent development is as automated and disciplined as software development. Ollie is how we start making that real.

Getting Started with Ollie

Ollie is free to try on Opik Cloud and is part of Opik Enterprise for self-hosted deployments. Opik’s core observability and testing capabilities (including the new Test Suites) remain fully open source. You can use Ollie inside Opik to analyze traces, pinpoint issues, and figure out fixes, but the real power comes when you connect it to your codebase to pair your local project with your Opik workspace. Here’s how to set it up.