| 1 | ## First we will configure the OpenTelemetry |

| 2 | from opentelemetry import trace |

| 3 | from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter |

| 4 | from opentelemetry.sdk.resources import Resource |

| 5 | from opentelemetry.sdk.trace import TracerProvider |

| 6 | from opentelemetry.sdk.trace.export import BatchSpanProcessor |

| 7 | from opentelemetry.instrumentation.openai import OpenAIInstrumentor |

| 8 | from opentelemetry.instrumentation.threading import ThreadingInstrumentor |

| 9 | |

| 10 | |

| 11 | def setup_telemetry(): |

| 12 | """Configure OpenTelemetry with HTTP exporter""" |

| 13 | # Create a resource with service name and other metadata |

| 14 | resource = Resource.create( |

| 15 | { |

| 16 | "service.name": "ag2-demo", |

| 17 | "service.version": "1.0.0", |

| 18 | "deployment.environment": "development", |

| 19 | } |

| 20 | ) |

| 21 | |

| 22 | # Create TracerProvider with the resource |

| 23 | provider = TracerProvider(resource=resource) |

| 24 | |

| 25 | # Create BatchSpanProcessor with OTLPSpanExporter |

| 26 | processor = BatchSpanProcessor(OTLPSpanExporter()) |

| 27 | provider.add_span_processor(processor) |

| 28 | |

| 29 | # Set the TracerProvider |

| 30 | trace.set_tracer_provider(provider) |

| 31 | |

| 32 | tracer = trace.get_tracer(__name__) |

| 33 | |

| 34 | # Instrument OpenAI calls |

| 35 | OpenAIInstrumentor().instrument(tracer_provider=provider) |

| 36 | |

| 37 | # AG2 calls OpenAI in background threads, propagate the context so all spans ends up in the same trace |

| 38 | ThreadingInstrumentor().instrument() |

| 39 | |

| 40 | return tracer, provider |

| 41 | |

| 42 | |

| 43 | # 1. Import our agent class |

| 44 | from autogen import ConversableAgent, LLMConfig |

| 45 | |

| 46 | # 2. Define our LLM configuration for OpenAI's GPT-4o mini |

| 47 | # uses the OPENAI_API_KEY environment variable |

| 48 | llm_config = LLMConfig(api_type="openai", model="gpt-4o-mini") |

| 49 | |

| 50 | # 3. Create our LLM agent within the parent span context |

| 51 | with llm_config: |

| 52 | my_agent = ConversableAgent( |

| 53 | name="helpful_agent", |

| 54 | system_message="You are a poetic AI assistant, respond in rhyme.", |

| 55 | ) |

| 56 | |

| 57 | |

| 58 | def main(message): |

| 59 | response = my_agent.run(message=message, max_turns=2, user_input=True) |

| 60 | |

| 61 | # 5. Iterate through the chat automatically with console output |

| 62 | response.process() |

| 63 | |

| 64 | # 6. Print the chat |

| 65 | print(response.messages) |

| 66 | |

| 67 | return response.messages |

| 68 | |

| 69 | |

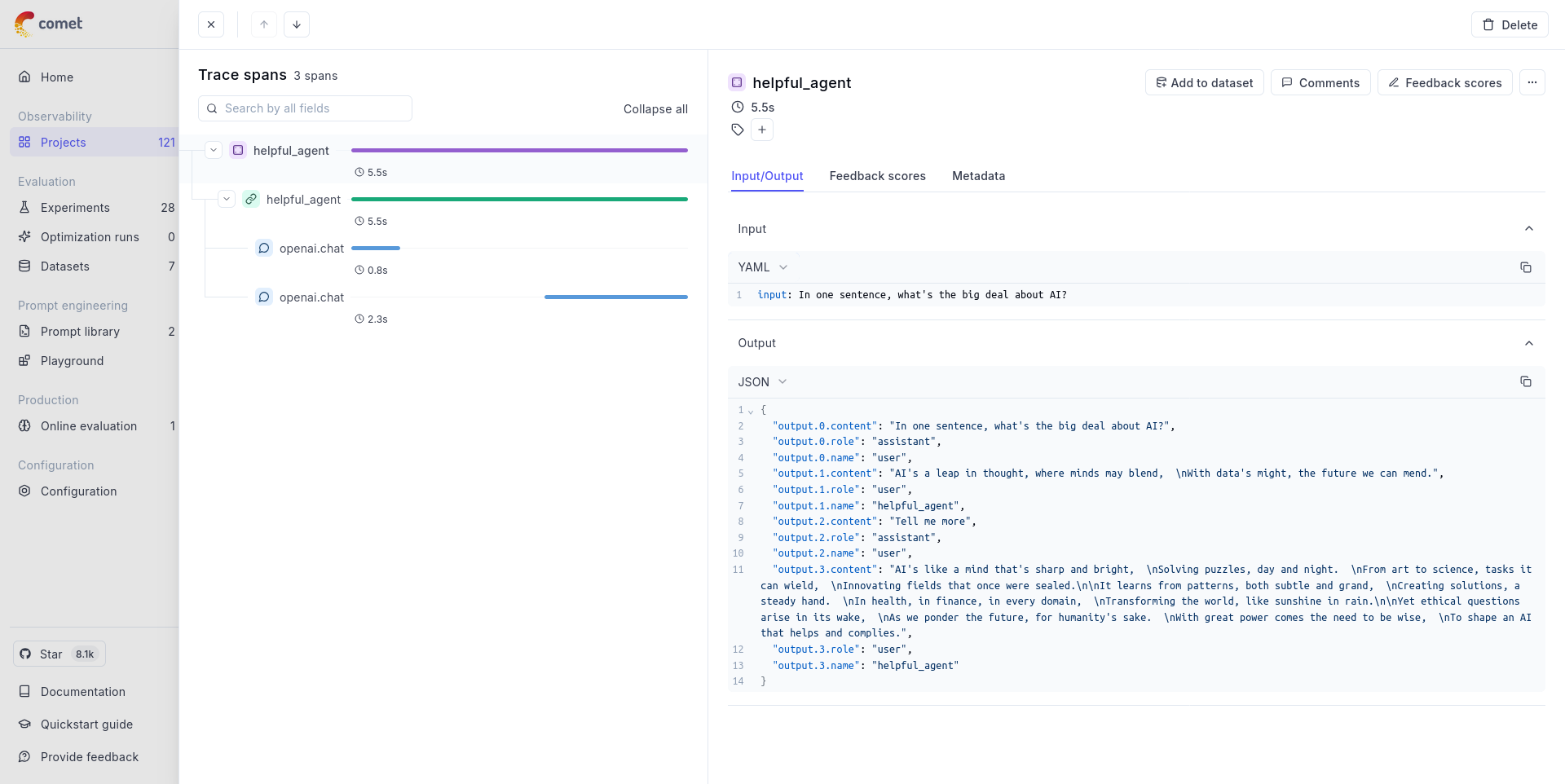

| 70 | if __name__ == "__main__": |

| 71 | tracer, provider = setup_telemetry() |

| 72 | |

| 73 | # 4. Run the agent with a prompt |

| 74 | with tracer.start_as_current_span(my_agent.name) as agent_span: |

| 75 | message = "In one sentence, what's the big deal about AI?" |

| 76 | |

| 77 | agent_span.set_attribute("input", message) # Manually log the question |

| 78 | |

| 79 | response = main(message) |

| 80 | |

| 81 | # Manually log the response |

| 82 | agent_span.set_attribute("output", response) |

| 83 | |

| 84 | # Force flush all spans to ensure they are exported |

| 85 | provider = trace.get_tracer_provider() |

| 86 | provider.force_flush() |
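Span attribute values in OpenTelemetry must be a primitive (`str`, `bool`, `int`, `float`) or a homogeneous sequence of primitives, which is why the list of chat messages has to be serialized before it can be logged as `output`. Below is a small sketch of a conversion helper. It assumes you want to keep valid values as they are and fall back to JSON for anything else. `to_span_attribute` is a hypothetical name and is not part of the OpenTelemetry API:

```python
import json
from typing import Any, List, Union

# Attribute value types allowed by the OpenTelemetry spec: a primitive,
# or a homogeneous sequence of primitives
Primitive = Union[str, bool, int, float]
_PRIMITIVE_TYPES = {str, bool, int, float}


def to_span_attribute(value: Any) -> Union[Primitive, List[Primitive]]:
    """Hypothetical helper: pass valid attribute values through unchanged,
    otherwise fall back to a JSON string."""
    if type(value) in _PRIMITIVE_TYPES:
        return value
    if isinstance(value, (list, tuple)):
        # Exact types, so [1, True] counts as mixed (bool subclasses int)
        element_types = {type(v) for v in value}
        if len(element_types) <= 1 and element_types <= _PRIMITIVE_TYPES:
            return list(value)
    # Dicts, nested or mixed lists, and arbitrary objects become JSON text;
    # default=str stops non-serializable objects from raising
    return json.dumps(value, default=str)


if __name__ == "__main__":
    messages = [{"role": "user", "content": "Hi"}]
    print(to_span_attribute(messages))
```

You could then log the result with `agent_span.set_attribute("output", to_span_attribute(messages))`. Falling back to JSON text instead of dropping the value keeps the full conversation readable in the trace viewer.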
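The `ThreadingInstrumentor` line is needed because OpenTelemetry's Python context, which tracks the current span, is built on `contextvars`. In CPython's default configuration, a new `threading.Thread` does not inherit the caller's context. The stdlib-only sketch below shows the effect, using a hypothetical `current_trace_id` variable as a stand-in for the current span. It captures the context with `contextvars.copy_context()` and runs the thread target inside it. This illustrates the idea behind the instrumentor, not its actual implementation:

```python
import contextvars
import threading
from typing import List, Optional, Tuple

# Hypothetical stand-in for the "current span" kept in OpenTelemetry's context
current_trace_id: contextvars.ContextVar[Optional[str]] = contextvars.ContextVar(
    "current_trace_id", default=None
)


def worker(results: List[Tuple[str, Optional[str]]], key: str) -> None:
    # Record which trace ID this background thread can see
    results.append((key, current_trace_id.get()))


def run_in_plain_thread(results: List[Tuple[str, Optional[str]]]) -> None:
    # A plain Thread starts with a fresh context, so the caller's value is not visible
    t = threading.Thread(target=worker, args=(results, "plain"))
    t.start()
    t.join()


def run_with_propagation(results: List[Tuple[str, Optional[str]]]) -> None:
    # Capture the caller's context now and run the target inside that copy
    ctx = contextvars.copy_context()
    t = threading.Thread(target=ctx.run, args=(worker, results, "propagated"))
    t.start()
    t.join()


if __name__ == "__main__":
    current_trace_id.set("trace-abc")
    results: List[Tuple[str, Optional[str]]] = []
    run_in_plain_thread(results)
    run_with_propagation(results)
    print(results)
```

On a default CPython build, the plain thread sees `None` and the propagated thread sees `"trace-abc"`. Without propagation, a span created in one of AG2's worker threads would have no parent, so it would start a new trace instead of nesting under the agent span.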
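Calling `OTLPSpanExporter()` without arguments leaves the destination to configuration. The OpenTelemetry OTLP exporter specification sets three rules:

* `OTEL_EXPORTER_OTLP_TRACES_ENDPOINT` is used exactly as given.
* `OTEL_EXPORTER_OTLP_ENDPOINT` is treated as a base URL, and `v1/traces` is appended to it.
* If neither is set, the OTLP/HTTP default is `http://localhost:4318/v1/traces`.

An explicit `endpoint=` argument passed to the exporter takes precedence over all of these. The hypothetical `resolve_traces_endpoint` below is a simplified mirror of those rules that you can use to check where spans will be sent:

```python
from typing import Mapping

DEFAULT_TRACES_ENDPOINT = "http://localhost:4318/v1/traces"


def resolve_traces_endpoint(env: Mapping[str, str]) -> str:
    """Hypothetical helper approximating the OTLP/HTTP trace endpoint rules."""
    # 1. A signal-specific endpoint is used exactly as given
    traces = env.get("OTEL_EXPORTER_OTLP_TRACES_ENDPOINT")
    if traces:
        return traces
    # 2. A base endpoint gets the signal path "v1/traces" appended
    base = env.get("OTEL_EXPORTER_OTLP_ENDPOINT")
    if base:
        return base + ("v1/traces" if base.endswith("/") else "/v1/traces")
    # 3. Otherwise fall back to the default local collector address
    return DEFAULT_TRACES_ENDPOINT


if __name__ == "__main__":
    import os

    print(resolve_traces_endpoint(os.environ))
```

For example, setting `OTEL_EXPORTER_OTLP_ENDPOINT=http://collector:4318` sends spans to `http://collector:4318/v1/traces`.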
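The final `force_flush()` sits after the `with` block for two reasons. A span processor receives a span only when that span ends, and the parent span ends only when the `with` block exits. The toy model below uses hypothetical classes, not the SDK, to show that a flush issued inside the block can export the child span but not the still-open parent:

```python
from contextlib import contextmanager
from typing import Iterator, List


class ToyBatchProcessor:
    """Toy model: like a SpanProcessor, it only sees a span once the span ends."""

    def __init__(self) -> None:
        self.queue: List[str] = []
        self.exported: List[str] = []

    def on_end(self, name: str) -> None:
        self.queue.append(name)

    def force_flush(self) -> None:
        # Export whatever has been queued so far
        self.exported.extend(self.queue)
        self.queue.clear()


@contextmanager
def toy_span(processor: ToyBatchProcessor, name: str) -> Iterator[None]:
    try:
        yield
    finally:
        # The span ends when its `with` block exits
        processor.on_end(name)


def run(flush_inside: bool) -> List[str]:
    processor = ToyBatchProcessor()
    with toy_span(processor, "helpful_agent"):
        with toy_span(processor, "openai.chat"):
            pass
        if flush_inside:
            processor.force_flush()
    if not flush_inside:
        processor.force_flush()
    return processor.exported


if __name__ == "__main__":
    print(run(flush_inside=True))
    print(run(flush_inside=False))
```

In the real SDK, `TracerProvider` also shuts down and flushes its processors at interpreter exit by default (its `shutdown_on_exit` parameter). Flushing after the block therefore mainly ensures the parent span is exported explicitly, rather than relying on that exit-time cleanup.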