Observability for Anthropic with Opik

Anthropic is an AI safety and research company that’s working to build reliable, interpretable, and steerable AI systems.

This guide explains how to integrate Opik with the Anthropic Python SDK. The track_anthropic function provided by opik wraps the Anthropic client so that you can track and evaluate your Anthropic API calls within your Opik projects: Opik automatically logs the input prompt, the model used, token usage, and the generated response.

Account Setup

Comet provides a hosted version of the Opik platform. To use it, create an account and grab your API key.

You can also run the Opik platform locally. See the installation guide for more information.

Getting Started

Installation

To start tracking your Anthropic LLM calls, you’ll need to have both the opik and anthropic packages. You can install them using pip:
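The usual command is `pip install opik anthropic`. As a quick sanity check after installing, the sketch below reports which of the two packages Python cannot find. The helper names `missing_packages` and `install_hint` are made up for this example and are not part of either SDK.

```python
import importlib.util

# The two packages this guide relies on.
REQUIRED_PACKAGES = ("opik", "anthropic")


def missing_packages(names=REQUIRED_PACKAGES):
    """Return the names from `names` that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def install_hint(names=REQUIRED_PACKAGES):
    """Return the pip command to run, or None when nothing is missing."""
    missing = missing_packages(names)
    return f"pip install {' '.join(missing)}" if missing else None
```

Calling `install_hint()` in the environment you plan to use returns `None` once both packages are available.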

Configuring Opik

Configure the Opik Python SDK for your deployment type. See the Python SDK Configuration guide for detailed instructions on:

- CLI configuration: opik configure

- Code configuration: opik.configure()

- Self-hosted vs Cloud vs Enterprise setup

- Configuration files and environment variables
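As a minimal sketch of the code-based option, the helper below calls `opik.configure()` with its `use_local` flag. The `"local"` and `"cloud"` labels and both function names are assumptions made for this example; the Python SDK Configuration guide remains the reference for the full set of options.

```python
def configure_kwargs(deployment: str) -> dict:
    """Map a deployment label (hypothetical, for this sketch) to opik.configure arguments."""
    if deployment == "local":
        return {"use_local": True}   # self-hosted Opik running on your machine
    if deployment == "cloud":
        return {"use_local": False}  # hosted Opik; an API key is required
    raise ValueError(f"Unknown deployment type: {deployment!r}")


def configure_opik(deployment: str = "cloud") -> None:
    """Configure the Opik SDK for the given deployment type."""
    # Imported lazily so the mapping above can be used without opik installed.
    import opik

    # For the hosted platform this may prompt for missing values such as the API key.
    opik.configure(**configure_kwargs(deployment))
```

Run `configure_opik("local")` once before logging anything when you use a local deployment.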

Configuring Anthropic

To configure Anthropic, you need to set your Anthropic API key. You can find or create your key on this page.

You can set it as an environment variable named ANTHROPIC_API_KEY, which the Anthropic SDK reads by default.

Or set it programmatically:
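One way to do this, sketched below, is to populate `ANTHROPIC_API_KEY` from Python and prompt for the key only when it is not already set. The helper name `ensure_anthropic_api_key` is an assumption for this example; the `env` and `prompt` parameters exist only to make the function easy to test.

```python
import getpass
import os


def ensure_anthropic_api_key(env=os.environ, prompt=getpass.getpass):
    """Make sure ANTHROPIC_API_KEY is set in `env`, prompting only when it is missing."""
    if not env.get("ANTHROPIC_API_KEY"):
        # getpass hides the typed key so it does not end up in the terminal history.
        env["ANTHROPIC_API_KEY"] = prompt("Enter your Anthropic API key: ")
    return env["ANTHROPIC_API_KEY"]
```

Call `ensure_anthropic_api_key()` before creating the client. Alternatively, the key can be passed directly when constructing the client, as in `anthropic.Anthropic(api_key="YOUR_API_KEY")`.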

Logging LLM calls

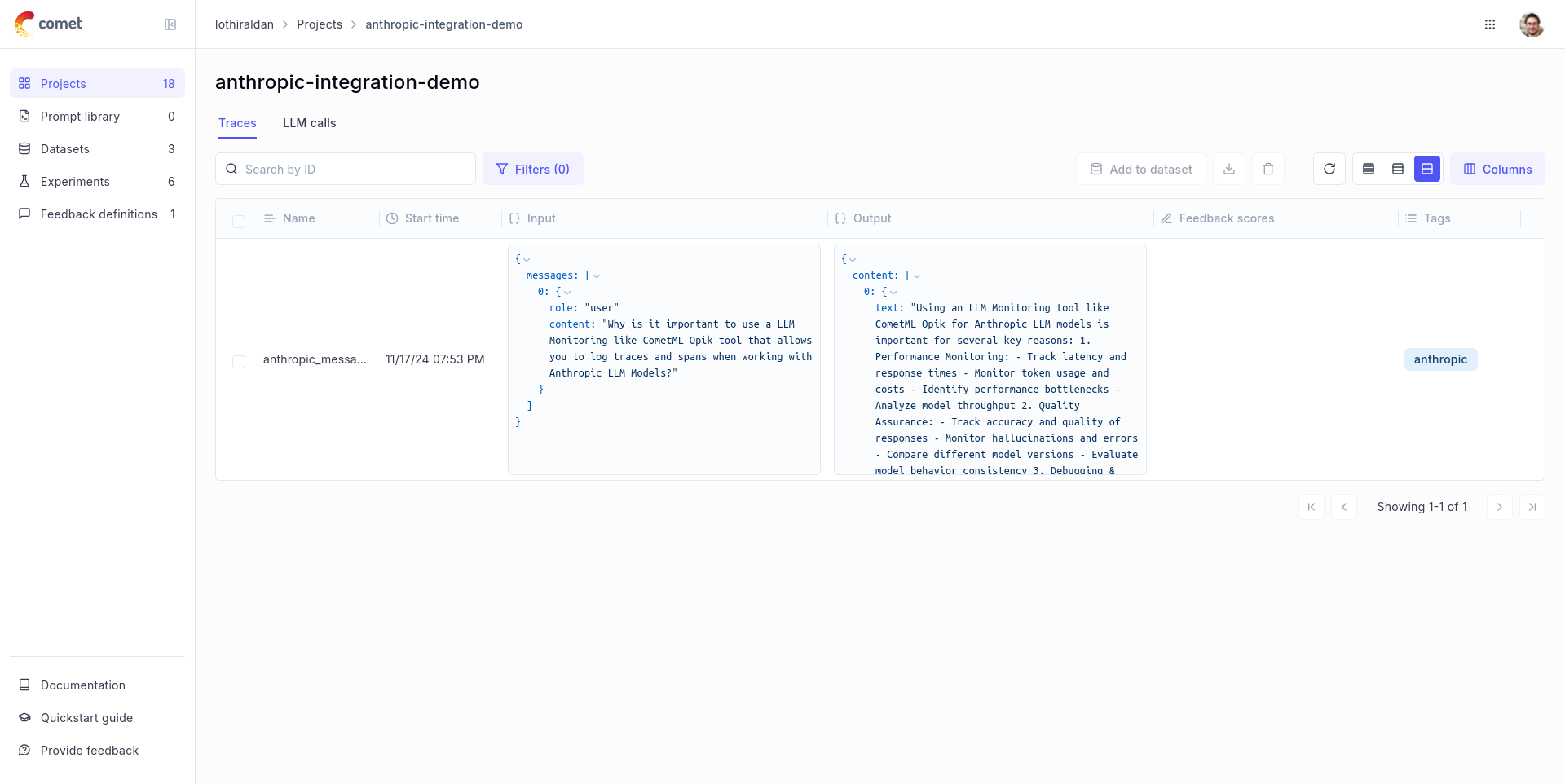

To log LLM calls to Opik, wrap the Anthropic client with track_anthropic. Every call made with the wrapped client is then logged to Opik:
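A minimal sketch of this pattern follows. The model name, project name, and helper names (`build_request`, `create_tracked_client`, `ask`) are assumptions chosen for this example; substitute a model your account can access.

```python
from typing import Any

# Assumption: replace with any Anthropic model available to your account.
MODEL = "claude-3-5-sonnet-20241022"


def build_request(prompt: str, model: str = MODEL, max_tokens: int = 1024) -> dict[str, Any]:
    """Build the keyword arguments for a single-turn messages.create call."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }


def create_tracked_client(project_name: str = "anthropic-integration-demo"):
    """Create an Anthropic client whose calls are logged to Opik."""
    # Imported lazily so build_request can be used without the SDKs installed.
    import anthropic
    from opik.integrations.anthropic import track_anthropic

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    return track_anthropic(client, project_name=project_name)


def ask(prompt: str) -> str:
    """Send one prompt through the tracked client and return the text reply."""
    client = create_tracked_client()
    response = client.messages.create(**build_request(prompt))
    return response.content[0].text
```

With Opik configured and the API key set, a call such as `ask("Tell me a short story about Opik")` should appear as a trace in the chosen project.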

Advanced Usage

Using with the @track decorator

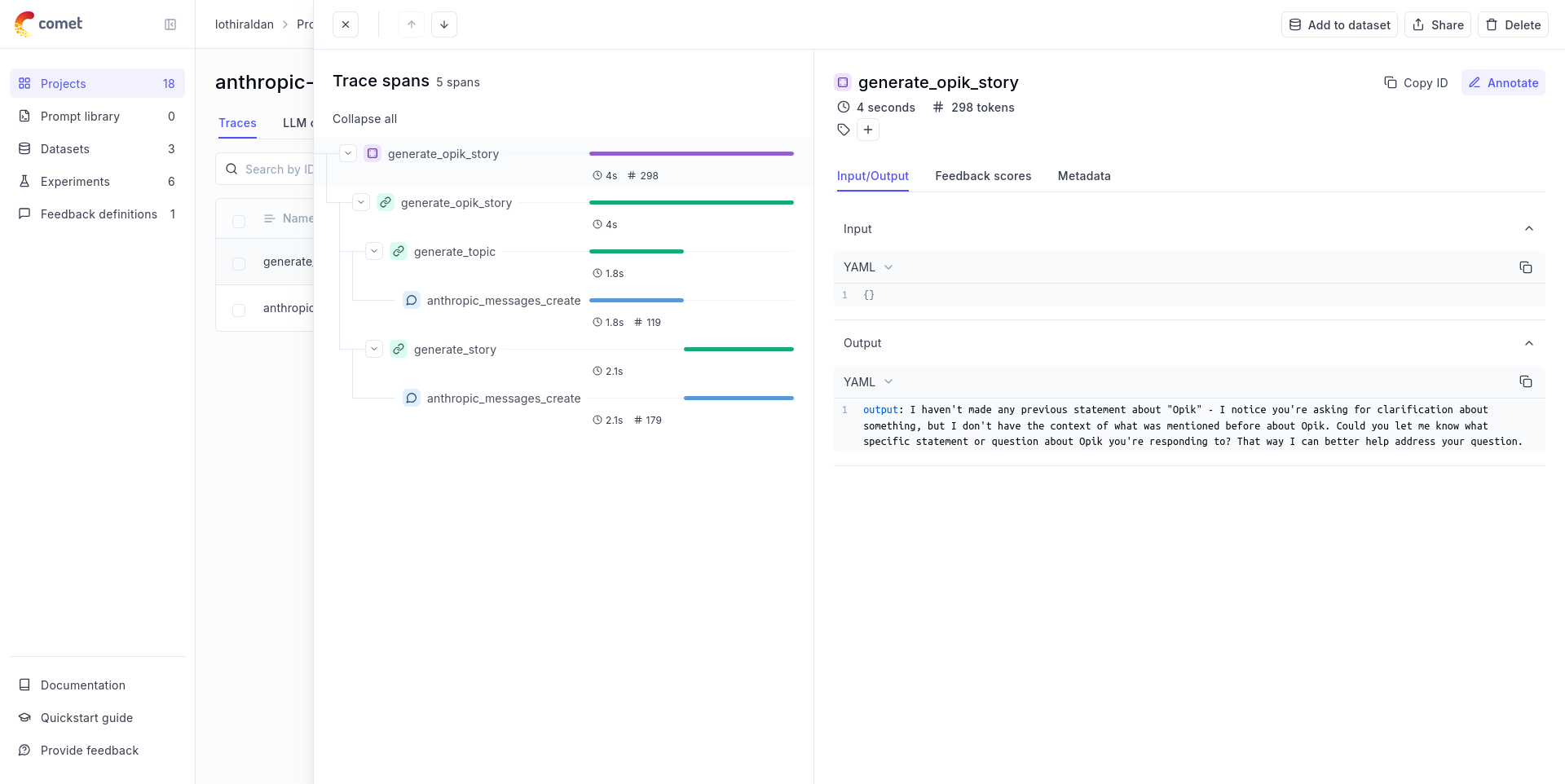

If you have multiple steps in your LLM pipeline, you can use the @track decorator to log the traces for each step. If Anthropic is called within one of these steps, the LLM call will be associated with that corresponding step:
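The sketch below shows one way to structure such a pipeline: two decorated steps that each call Anthropic, and a decorated parent function that chains them. The prompts, model name, and function names are illustrative assumptions, not taken from the Opik documentation.

```python
# Assumption: replace with any Anthropic model available to your account.
MODEL = "claude-3-5-sonnet-20241022"


def topic_prompt() -> str:
    return "Suggest one topic for a short story. Reply with the topic only."


def story_prompt(topic: str) -> str:
    return f"Write a three-sentence story about: {topic}"


def build_pipeline(model: str = MODEL):
    """Return a traced two-step pipeline backed by a tracked Anthropic client."""
    # Imported lazily so the prompt helpers can be used without the SDKs installed.
    import anthropic
    from opik import track
    from opik.integrations.anthropic import track_anthropic

    client = track_anthropic(anthropic.Anthropic())

    def ask(prompt: str) -> str:
        response = client.messages.create(
            model=model,
            max_tokens=512,
            messages=[{"role": "user", "content": prompt}],
        )
        return response.content[0].text

    @track
    def generate_topic() -> str:
        return ask(topic_prompt())

    @track
    def generate_story(topic: str) -> str:
        return ask(story_prompt(topic))

    @track
    def generate_story_pipeline() -> str:
        # Each decorated step called here is logged under this parent trace.
        return generate_story(generate_topic())

    return generate_story_pipeline
```

Calling `build_pipeline()()` runs both steps; each Anthropic call is attached to the step that made it.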

The trace can then be viewed in the Opik UI, with hierarchical spans showing the relationship between the steps.

Cost Tracking

The track_anthropic wrapper automatically tracks token usage and cost for all supported Anthropic models.

The Opik UI displays the captured cost information, including:

- Token usage details

- Cost per request based on Anthropic pricing

- Total trace cost
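Opik computes these figures for you. The sketch below only illustrates the arithmetic behind a per-request cost and a trace total, assuming pricing is quoted per million input and output tokens. The rates are parameters with no defaults because this example does not reproduce Anthropic's actual prices, and the function names are hypothetical.

```python
def estimate_request_cost(
    input_tokens: int,
    output_tokens: int,
    input_rate_per_mtok: float,
    output_rate_per_mtok: float,
) -> float:
    """Cost of one request, given rates expressed per million tokens."""
    return (
        input_tokens * input_rate_per_mtok + output_tokens * output_rate_per_mtok
    ) / 1_000_000


def estimate_trace_cost(calls, input_rate_per_mtok: float, output_rate_per_mtok: float) -> float:
    """Total cost of a trace, where `calls` is a list of (input_tokens, output_tokens) pairs."""
    return sum(
        estimate_request_cost(i, o, input_rate_per_mtok, output_rate_per_mtok)
        for i, o in calls
    )
```

The token counts correspond to the `input_tokens` and `output_tokens` values that the Anthropic API reports in each response's `usage` field.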

View the complete list of supported models and providers on the Supported Models page.