Observability for BeeAI (TypeScript) with Opik

BeeAI is a framework for building AI agents with a focus on simplicity and performance. It provides a clean API with built-in support for tool usage, conversation management, and an extensible architecture.

BeeAI’s primary advantage is its lightweight design, which makes it easy to create and deploy AI agents without unnecessary complexity while retaining the capabilities needed for production use.

Getting started

To use the BeeAI integration with Opik, you will need to have BeeAI and the required OpenTelemetry packages installed.

Installation

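Option 1: Using npm

    npm install beeai-framework@0.1.13 @arizeai/openinference-instrumentation-beeai @opentelemetry/sdk-node

Option 2: Using yarn

    yarn add beeai-framework@0.1.13 @arizeai/openinference-instrumentation-beeai @opentelemetry/sdk-node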

Version Compatibility: The BeeAI instrumentation currently supports beeai-framework version 0.1.13. Using a newer version may cause compatibility issues.

Requirements

- Node.js ≥ 18

- BeeAI Framework (beeai-framework)

- OpenInference Instrumentation for BeeAI (@arizeai/openinference-instrumentation-beeai)

- OpenTelemetry SDK for Node.js (@opentelemetry/sdk-node)

Environment configuration

Configure your environment variables based on your Opik deployment:

Intent: Route BeeAI OpenInference spans to Opik through OTLP. No breaking changes: existing BeeAI setups continue to work; these settings are only required to send traces to Opik.

Applies when:

You run BeeAI (TypeScript) with @arizeai/openinference-instrumentation-beeai.

Required fields:

- OTEL_EXPORTER_OTLP_ENDPOINT

- OTEL_EXPORTER_OTLP_HEADERS (Authorization, Comet-Workspace for Cloud/Enterprise)

Optional fields:

- projectName in OTEL_EXPORTER_OTLP_HEADERS (recommended)

- additional OpenTelemetry env vars for sampling/resource labels

Minimal valid input:

- endpoint for your deployment mode

- matching headers for auth and routing
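The required fields above can be checked before the app starts. The following is a minimal sketch, not part of Opik or BeeAI: `checkOtlpEnv`, `parseOtlpHeaders`, and the `cloudOrEnterprise` flag are hypothetical names, and the header variable is assumed to use the usual OTLP form of comma-separated `key=value` pairs.

```typescript
// Hypothetical pre-flight check for the OTLP settings listed above.
type Env = Record<string, string | undefined>;

// Parse "k1=v1,k2=v2" into a map. Only the first "=" separates key from value,
// so values that contain "=" are preserved.
function parseOtlpHeaders(raw: string): Map<string, string> {
  const headers = new Map<string, string>();
  for (const pair of raw.split(",")) {
    const idx = pair.indexOf("=");
    if (idx <= 0) continue; // skip empty or malformed entries
    headers.set(pair.slice(0, idx).trim(), pair.slice(idx + 1).trim());
  }
  return headers;
}

// Returns a list of human-readable problems; an empty list means the
// minimal valid input is present.
function checkOtlpEnv(env: Env, cloudOrEnterprise: boolean): string[] {
  const problems: string[] = [];
  if (!env["OTEL_EXPORTER_OTLP_ENDPOINT"]) {
    problems.push("OTEL_EXPORTER_OTLP_ENDPOINT is not set");
  }
  if (cloudOrEnterprise) {
    const headers = parseOtlpHeaders(env["OTEL_EXPORTER_OTLP_HEADERS"] ?? "");
    for (const key of ["Authorization", "Comet-Workspace"]) {
      if (!headers.get(key)) {
        problems.push(`${key} is missing from OTEL_EXPORTER_OTLP_HEADERS`);
      }
    }
  }
  return problems;
}
```

In a Node.js app, `checkOtlpEnv(process.env, true)` would run the same check against the real environment; logging the returned problems at startup makes a missing header visible before any trace is dropped.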

Opik Cloud

Enterprise deployment

Self-hosted instance

To log the traces to a specific project, you can add the projectName parameter to the OTEL_EXPORTER_OTLP_HEADERS environment variable.

You can also update the Comet-Workspace parameter to a different value if you would like to log the data to a different workspace.
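Because OTEL_EXPORTER_OTLP_HEADERS is a single comma-separated string, it can be easier to assemble it from named parts. This is a sketch under that assumption: `buildOtlpHeaders` and its option names are hypothetical, and the API key, workspace, and project values in the usage line are placeholders.

```typescript
// Hypothetical helper that assembles the OTEL_EXPORTER_OTLP_HEADERS value.
interface OpikHeaderOptions {
  apiKey?: string;      // emitted as Authorization (Cloud/Enterprise)
  workspace?: string;   // emitted as Comet-Workspace (Cloud/Enterprise)
  projectName?: string; // optional: project that should receive the traces
}

function buildOtlpHeaders(opts: OpikHeaderOptions): string {
  const pairs: [string, string | undefined][] = [
    ["Authorization", opts.apiKey],
    ["Comet-Workspace", opts.workspace],
    ["projectName", opts.projectName],
  ];
  return pairs
    // Drop entries that were not provided.
    .filter((p): p is [string, string] => p[1] !== undefined && p[1] !== "")
    // Note: values containing commas are not handled in this sketch.
    .map(([key, value]) => `${key}=${value}`)
    .join(",");
}

// Placeholder values; replace them with your own key, workspace, and project.
const exampleHeaders = buildOtlpHeaders({
  apiKey: "<your-api-key>",
  workspace: "default",
  projectName: "beeai-demo",
});
// exampleHeaders === "Authorization=<your-api-key>,Comet-Workspace=default,projectName=beeai-demo"
```

Switching workspaces or projects then means changing one option rather than editing the string by hand.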

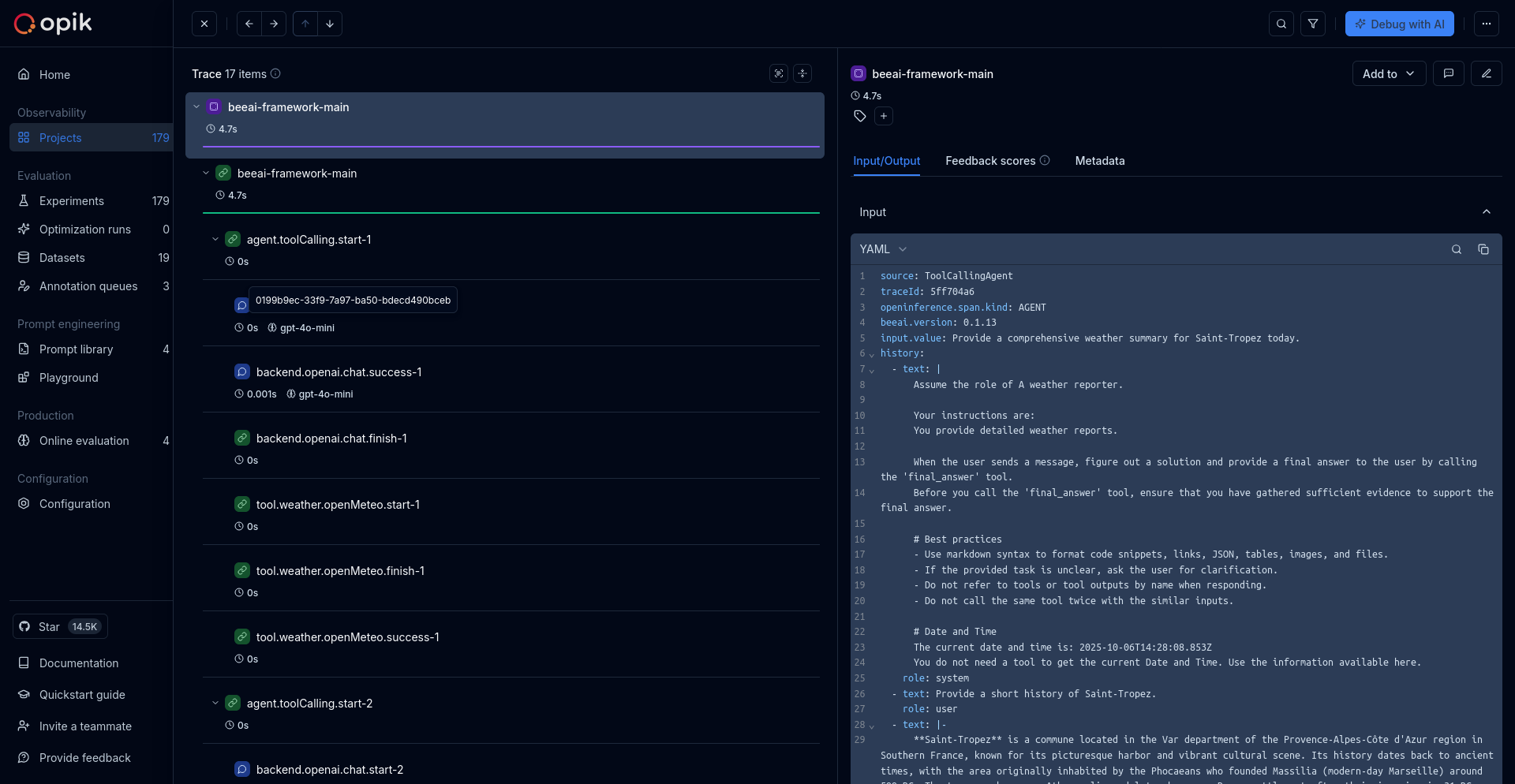

Using Opik with BeeAI

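Set up OpenTelemetry instrumentation for BeeAI by registering the BeeAI instrumentation with the OpenTelemetry Node SDK and starting the SDK before the first agent runs.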
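A minimal sketch of this setup follows. It assumes that @arizeai/openinference-instrumentation-beeai exports a `BeeAIInstrumentation` class with a `manuallyInstrument` method, and that `NodeSDK` falls back to an OTLP exporter configured from the OTEL_EXPORTER_OTLP_* environment variables when no exporter is passed. Check both assumptions against the package versions you have installed.

```typescript
import { NodeSDK } from "@opentelemetry/sdk-node";
import { BeeAIInstrumentation } from "@arizeai/openinference-instrumentation-beeai";
import * as beeaiFramework from "beeai-framework";

// Create the instrumentation that turns BeeAI activity into OpenInference spans.
const beeAIInstrumentation = new BeeAIInstrumentation();

// Start the OpenTelemetry SDK. No exporter is passed here: this sketch relies on
// the SDK reading OTEL_EXPORTER_OTLP_ENDPOINT and OTEL_EXPORTER_OTLP_HEADERS
// from the environment (an assumption about the SDK's default behavior).
const sdk = new NodeSDK({
  instrumentations: [beeAIInstrumentation],
});
sdk.start();

// Assumption: the framework module is patched explicitly so that agents
// created after this point emit spans.
beeAIInstrumentation.manuallyInstrument(beeaiFramework);
```

Load this file before any module that creates agents, so that the framework is already patched when the first agent runs.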

Validation

- Start the app and run one agent request.

- Confirm OTLP export succeeds.

- Verify the trace in Opik under the expected workspace/project.
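For the last step, it helps to know which workspace and project the configured headers point at. The sketch below reads those two entries back out of a header string; `traceDestination` is a hypothetical helper, and it makes no claim about which project Opik uses when projectName is absent.

```typescript
// Hypothetical helper: reports where traces are routed according to the
// OTEL_EXPORTER_OTLP_HEADERS value ("k1=v1,k2=v2").
function traceDestination(rawHeaders: string): {
  workspace: string | undefined;
  project: string | undefined;
} {
  const entries = new Map<string, string>();
  for (const pair of rawHeaders.split(",")) {
    const idx = pair.indexOf("=");
    if (idx > 0) {
      entries.set(pair.slice(0, idx).trim(), pair.slice(idx + 1).trim());
    }
  }
  return {
    workspace: entries.get("Comet-Workspace"),
    project: entries.get("projectName"),
  };
}
```

Printing `traceDestination(process.env.OTEL_EXPORTER_OTLP_HEADERS ?? "")` next to the first agent request shows where to look in the Opik UI.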

Further improvements

If you have any questions or suggestions for improving the BeeAI integration, please open an issue on our GitHub repository.