Integrate with Vertex AI

Comet integrates with Google Vertex AI.

Google Vertex AI lets you build, deploy, and scale ML models faster, with pre-trained and custom tooling within a unified artificial intelligence platform.

Visualize Vertex AI Pipelines

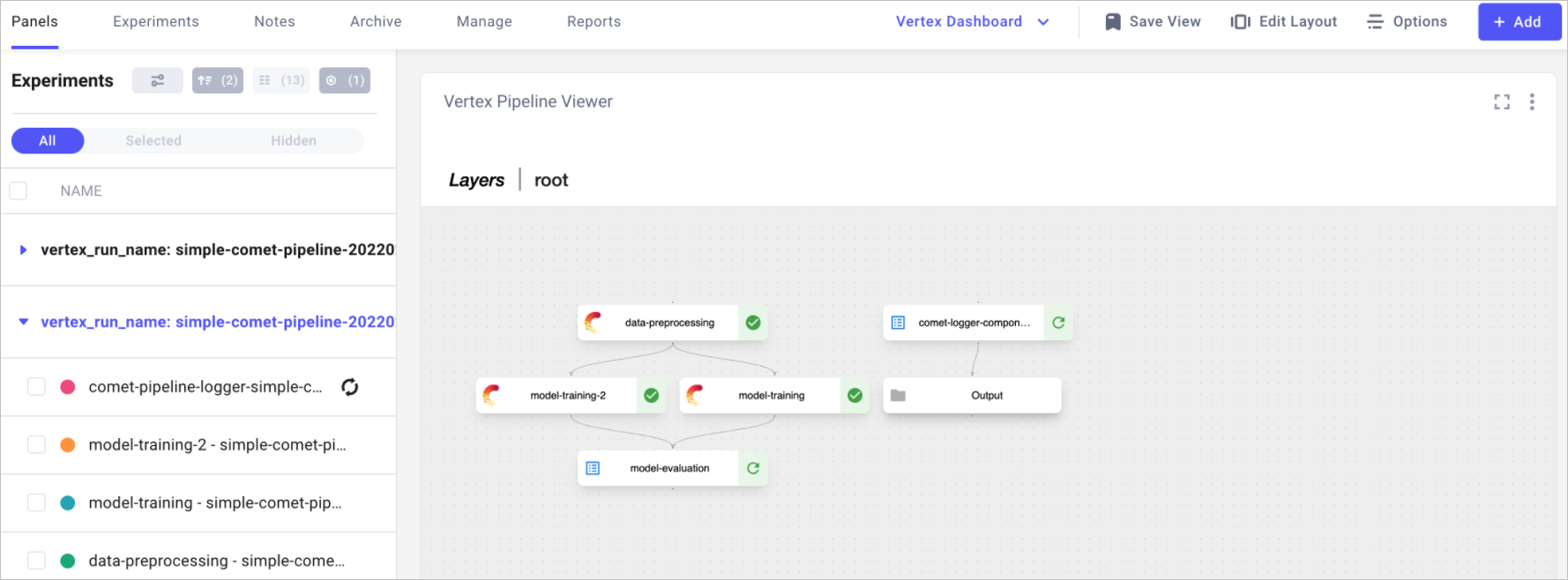

Comet partnered with Vertex AI to allow you to track not just individual training runs but also Vertex Pipelines within the Comet UI.

You can visualize the state of the pipeline with the Vertex AI Pipelines panel, available in the Featured tab.

You can recreate the view above by:

- Grouping Experiments by vertex_run_name.

- Adding the Vertex AI Pipelines panel available in the Featured tab.

- Saving the view as a Vertex dashboard.
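To make the grouping step concrete, here is a minimal local sketch of what grouping by vertex_run_name amounts to. It does not call the Comet API: the record layout, the experiment names, and the run names are all hypothetical, and the helper group_by_run_name is only an illustration.

```python
from collections import defaultdict


def group_by_run_name(experiments):
    """Group experiment names by their vertex_run_name value."""
    groups = defaultdict(list)
    for record in experiments:
        # Records without the field end up in a separate bucket.
        run_name = record.get("vertex_run_name", "<no run name>")
        groups[run_name].append(record["name"])
    return dict(groups)


# Hypothetical records standing in for Comet Experiments.
sample = [
    {"name": "pipeline-logger", "vertex_run_name": "ml-training-pipeline-001"},
    {"name": "train-task", "vertex_run_name": "ml-training-pipeline-001"},
    {"name": "train-task", "vertex_run_name": "ml-training-pipeline-002"},
]
grouped = group_by_run_name(sample)
```

Each key in grouped corresponds to one pipeline run, which is the unit the dashboard view displays after grouping.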

The Vertex AI Pipelines panel can show either the latest state of the DAG or the static graph, similar to what is available in the Vertex AI Pipelines UI. In addition, every task marked with the Comet logo is also tracked in Comet and can be opened by clicking the task.

Integrate in two steps

- Add a Comet logger component to track the state of the pipeline.

- Add Comet to each individual task that you would like to track as a Comet Experiment.

Log the state of a pipeline

The Vertex AI Pipelines integration relies on a component that runs in parallel to the rest of the pipeline. The comet_logger_component logs the state of the pipeline as a whole and reports it back to the Comet UI, so that you can follow the pipeline's progress. This component can be used like this:

```python
import comet_ml.integration.vertex
import kfp.dsl as dsl


@dsl.pipeline(name="ML training pipeline")
def ml_training_pipeline():
    # Create a Vertex Logger
    logger = comet_ml.integration.vertex.CometVertexPipelineLogger(
        api_key="<>", workspace="<>", project_name="<>"
    )

    # Rest of the training pipeline
    # Wrap each task of your pipeline with track_task to track them with Comet
    task = logger.track_task(model_training_op())
```

The Comet Vertex logger can also be configured using environment variables, in which case you will not need to specify the api_key, workspace or project_name arguments.
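As a minimal sketch of the environment-variable route, the helper below checks that the settings are present before you compile the pipeline. It assumes the standard Comet SDK variable names COMET_API_KEY, COMET_WORKSPACE, and COMET_PROJECT_NAME; the helper name missing_comet_settings is hypothetical and not part of comet_ml.

```python
import os

# Environment variables read by the Comet SDK (assumed to cover the Vertex logger).
COMET_ENV_VARS = ("COMET_API_KEY", "COMET_WORKSPACE", "COMET_PROJECT_NAME")


def missing_comet_settings(environ=None):
    """Return the Comet environment variables that are unset or empty."""
    env = os.environ if environ is None else environ
    return [name for name in COMET_ENV_VARS if not env.get(name)]
```

If missing_comet_settings() returns an empty list, the logger can be created without the api_key, workspace, or project_name arguments; otherwise the returned names tell you which variables still need to be exported.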

Log automatically

The Comet Vertex logger creates an Experiment when the Vertex pipeline is compiled into JSON. Through the Experiment, the Vertex pipeline code and all of the other environment details listed here are logged automatically. In addition, the following Vertex-specific information is logged:

| Item Name | Item type | Description |
|---|---|---|
| vertex_run_name | Other | The Vertex pipeline name |
| vertex_run_id | Other | The Vertex unique run id |
| vertex_task_type | Other | Internal field used to distinguish between task and pipeline Experiments |
| pipeline_type | Other | Internal field used to distinguish between integrations |
| vertex-pipeline | Asset | The status of the Vertex pipeline |

If you want to have more control over what is logged automatically or want to log additional information, you can create your own Experiment and pass it to the Comet logger component, like this:

```python
import os

import comet_ml
import comet_ml.integration.vertex
import kfp.dsl as dsl


@dsl.pipeline(name="ML training pipeline")
def ml_training_pipeline():
    # Your own experiment object
    experiment = comet_ml.start(api_key="<>", workspace="<>", project_name="<>")

    # Log an additional code file
    experiment.log_code("./utils/helper.py")

    # Log additional information
    trigger_reason = os.environ.get("TRIGGER_REASON", "Manual trigger")
    experiment.log_other("Trigger reason", trigger_reason)

    # Add the Comet logger component
    logger = comet_ml.integration.vertex.CometVertexPipelineLogger(
        custom_experiment=experiment
    )

    # Flush all of the information
    experiment.end()

    # Rest of the training pipeline
```

Log the state of each task

Create an Experiment object inside each Vertex component that you want to track. If you wrapped the Vertex task with CometVertexPipelineLogger.track_task, all of the Vertex metadata will be automatically logged in that Experiment.

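```python
import kfp.v2.dsl


@kfp.v2.dsl.component(packages_to_install=["comet_ml"])
def my_component() -> None:
    # Imports go inside the function because the component runs in its own container
    import comet_ml

    experiment = comet_ml.start(api_key="<>")

    # Rest of the task code
```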

Log automatically

The Experiment automatically logs all of the other environment details listed here. In addition, the following Vertex-specific information is logged:

| Item Name | Item type | Description |
|---|---|---|
| vertex_run_name | Other | The Vertex pipeline name |
| vertex_task_id | Other | The Vertex unique task id |
| vertex_task_name | Other | The Vertex task name |
| vertex_task_type | Other | Internal field used to distinguish between task and pipeline Experiments |
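The pipeline-level and task-level tables differ in a few keys: pipeline Experiments carry vertex_run_id, while task Experiments carry vertex_task_id and vertex_task_name. The sketch below uses only that difference to tell the two apart in a dictionary of logged "Other" values. It is a heuristic under stated assumptions: the function name experiment_level and the sample values are hypothetical, and it deliberately avoids vertex_task_type because the values of that internal field are not documented here.

```python
def experiment_level(others):
    """Guess whether a set of logged fields came from a task or a pipeline Experiment."""
    # Task Experiments log task-specific identifiers.
    if "vertex_task_id" in others or "vertex_task_name" in others:
        return "task"
    # Pipeline Experiments log the run id instead.
    if "vertex_run_id" in others:
        return "pipeline"
    return "unknown"


# Hypothetical field values, for illustration only.
pipeline_fields = {"vertex_run_name": "ml-training-pipeline", "vertex_run_id": "run-1"}
task_fields = {
    "vertex_run_name": "ml-training-pipeline",
    "vertex_task_id": "task-7",
    "vertex_task_name": "model-training",
}
```

Both kinds of Experiment share vertex_run_name, which is why that field works as the grouping key for a whole pipeline run.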

Note

There are alternatives to setting the API key programmatically. See more here.