Search & Export LLM prompts and chains¶

The Prompts page gives you access to all logged prompts and chains for interactive analysis of your prompt engineering workflows from the Comet UI.

For programmatic search and export of your LLM prompts and chains, the comet-llm Python SDK offers two objects that allow you to interact with your LLM prompts: the API object and the LLMTraceAPI object.

Below we provide information and instructions on how to search and export your LLM traces.

Note

comet-llm refers to any logged prompt and chain, with associated metadata and other attributes, as a "trace".

Get LLM traces¶

You can search through your LLM prompts and chains by using the API methods for getting and querying the logged traces.

Note

The LLMTraceAPI object is returned by the API object methods only, and cannot be instantiated directly!

Get a trace by key¶

The safest way to access a single trace is using the API.get_llm_trace_by_key() method.

You can get a trace key in three ways:

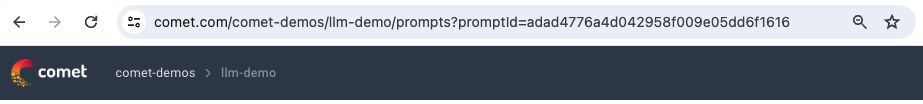

From the Prompts table in the Comet UI, select any prompt or chain and find the id from the URL.

For example:

The URL of an example prompt/chain selected in the Prompts table The key (or id) of the selected prompt in the Prompts table is "adad4776a4d042958f009e05dd6f1616". You can use this key to programmatically get a trace as follows:

```python
import comet_llm

# Initialize the CometLLM SDK
comet_llm.init()

# Create a Comet LLM API object
llm_api = comet_llm.API()

# Fetch a trace by key
trace_key = "adad4776a4d042958f009e05dd6f1616"
trace = llm_api.get_llm_trace_by_key(trace_key)
```

- For a logged prompt or chain in the session, access the id attribute of the object instance.

For example:

```python
import comet_llm

# Initialize the CometLLM SDK
comet_llm.init()

# Log a prompt (or get the prompt key from a previously logged prompt from Comet UI)
logged_prompt = comet_llm.log_prompt(prompt="Hello", output="World!")

# Create a Comet LLM API object
llm_api = comet_llm.API()

# Fetch a trace by key
trace_key = logged_prompt.id
trace = llm_api.get_llm_trace_by_key(trace_key)
```

- Get a trace by name, and then use the LLMTraceAPI.get_key() method.

Note

It is not required to specify project and workspace when accessing traces by key since the key is unique across all projects and workspaces for the user.
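As a rough sketch of the first approach, the helper below pulls a key out of a copied URL and then fetches the trace. It assumes that the key is the only 32-character hexadecimal segment in the URL, as in the example key above; the actual URL layout may differ. `extract_trace_key` and `fetch_trace_from_url` are hypothetical helper names, not part of comet-llm, and the API object is passed in by the caller.

```python
import re

# Assumption: a trace key is a 32-character lowercase hex string, like the
# example key shown above. The real URL layout may differ.
_KEY_PATTERN = re.compile(r"(?<![0-9a-f])[0-9a-f]{32}(?![0-9a-f])")


def extract_trace_key(url: str) -> str:
    """Return the first 32-character hex segment found in ``url``."""
    match = _KEY_PATTERN.search(url)
    if match is None:
        raise ValueError(f"no trace key found in URL: {url!r}")
    return match.group(0)


def fetch_trace_from_url(llm_api, url: str):
    """Fetch a trace via ``llm_api.get_llm_trace_by_key`` using the key in ``url``."""
    return llm_api.get_llm_trace_by_key(extract_trace_key(url))
```

With the real SDK you would pass `comet_llm.API()` as `llm_api` after calling `comet_llm.init()`.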

Get a trace by name¶

Another way to get a single trace is to use the API.get_llm_trace_by_name() method.

You can get a trace name in two ways:

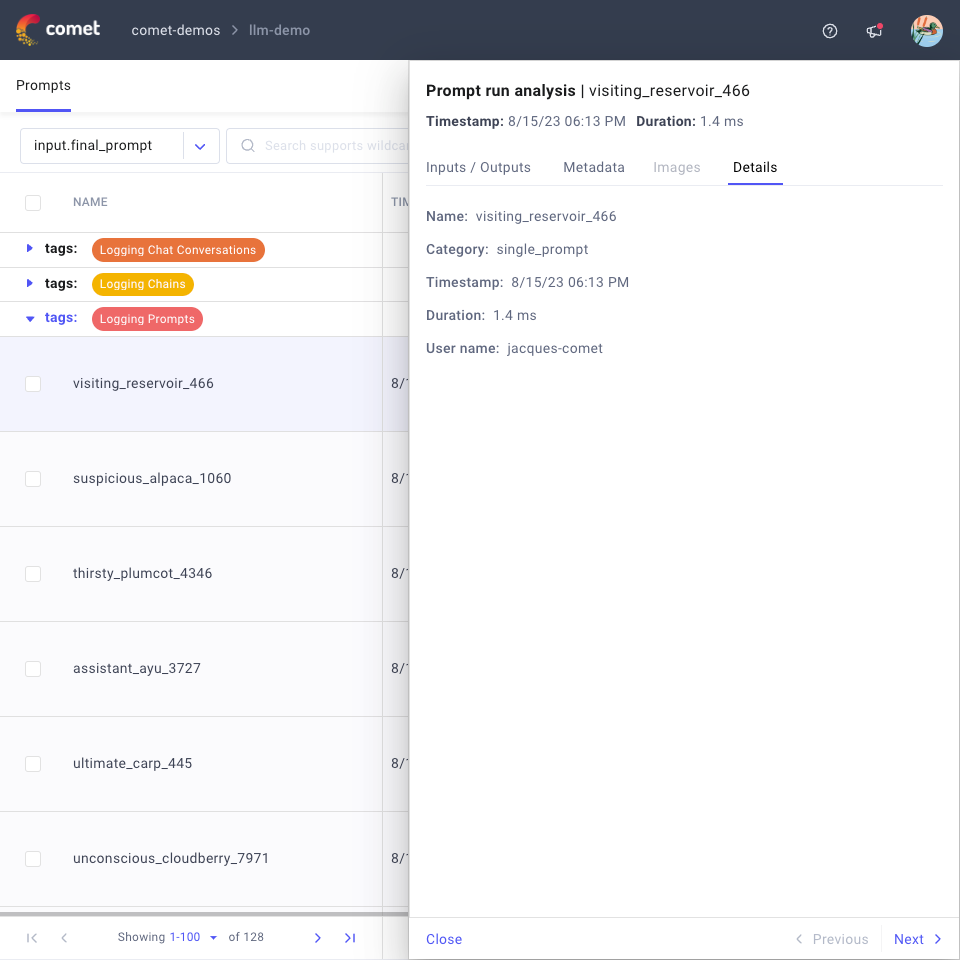

From the Prompts table in the Comet UI, select any prompt or chain and look at the Details tab.

For example:

The name of an example prompt/chain selected in the Prompts table The name of the selected prompt in the Prompts table is "visiting_reservoir_466". You can use this name to programmatically get a trace as follows:

```python
import comet_llm

# Initialize the CometLLM SDK
comet_llm.init()

# Create a Comet LLM API object
llm_api = comet_llm.API()

# Fetch a trace by name
trace_name = "visiting_reservoir_466"
trace = llm_api.get_llm_trace_by_name(
    trace_name=trace_name,
    project_name="llm-demo",
    workspace="comet-demos",
)
```

- Get a trace by key, and then use the LLMTraceAPI.get_name() method.
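Because a key can be resolved from a name and a name from a key, the two lookups can be wrapped in small helpers. The sketch below uses only the methods named in this section (`get_llm_trace_by_name()`, `get_llm_trace_by_key()`, `get_key()`, `get_name()`); the helper names `key_for_name` and `name_for_key` are hypothetical, and the API object is supplied by the caller.

```python
def key_for_name(llm_api, trace_name: str, project_name: str, workspace: str) -> str:
    """Look a trace up by name and return its key."""
    trace = llm_api.get_llm_trace_by_name(
        trace_name=trace_name,
        project_name=project_name,
        workspace=workspace,
    )
    return trace.get_key()


def name_for_key(llm_api, trace_key: str) -> str:
    """Look a trace up by key and return its name."""
    trace = llm_api.get_llm_trace_by_key(trace_key)
    return trace.get_name()
```

For instance, `key_for_name(llm_api, "visiting_reservoir_466", "llm-demo", "comet-demos")` would return the key of the trace used in the example above, given an initialized `comet_llm.API()` object.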

Search traces based on a query¶

If you would like to find a set of traces, or a specific trace for which you don't have the key or the name, you can search across all logged prompts and chains in an LLM project based on the attributes stored in the Metadata and Details tabs of the Prompts page.

A query is defined as one or more conditions, each in the format:

trace_variable operator value

where:

- trace_variable is one of the supported search attributes such as Duration() or TraceMetadata().

- operator is a comparison operator such as == or >.

- value is the string or integer value that the trace_variable needs to match.


Please refer to API.query() for more details.
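To illustrate how a query might be run and its results consumed, here is a minimal sketch. It assumes that `API.query()` accepts `workspace`, `project_name` and `query` keyword arguments and returns an iterable of LLMTraceAPI objects, and that the search attributes can be imported from `comet_llm.query_dsl`; please verify both against the API.query() reference. `query_trace_names` is a hypothetical helper name.

```python
def query_trace_names(llm_api, workspace: str, project_name: str, query):
    """Run ``query`` against one project and return the names of matching traces."""
    # Assumed signature: query(workspace=..., project_name=..., query=...)
    traces = llm_api.query(
        workspace=workspace,
        project_name=project_name,
        query=query,
    )
    return [trace.get_name() for trace in traces]


# Usage with the real SDK (assumed import path, not executed here):
#   import comet_llm
#   from comet_llm.query_dsl import Duration
#   comet_llm.init()
#   names = query_trace_names(
#       comet_llm.API(), "comet-demos", "llm-demo", Duration() > 10
#   )
```

Here `Duration() > 10` follows the trace_variable, operator, value pattern described above: it is meant to match traces whose duration is greater than 10.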

Export LLM Prompts metadata¶

Once you have obtained one or more LLMTraceAPI objects using one of the methods described above, you can use:

- LLMTraceAPI.get_metadata() to access and export the information associated with the logged prompt or chain.

- LLMTraceAPI.log_user_feedback() and LLMTraceAPI.log_metadata() to update the information associated with the logged prompt or chain.

Please refer to the linked references for more details on each method.
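As an illustration of an export workflow, the sketch below writes one JSON line per trace to a file and shows a small update helper. It assumes that `get_metadata()` returns a dictionary, that `log_metadata()` takes a dictionary, and that `log_user_feedback()` takes a numeric score; check the linked references for the exact signatures. `export_metadata` and `annotate_trace` are hypothetical helper names.

```python
import json


def export_metadata(traces, path: str) -> int:
    """Write one JSON object per trace (key, name, metadata) to ``path``.

    Returns the number of traces written.
    """
    count = 0
    with open(path, "w", encoding="utf-8") as handle:
        for trace in traces:
            record = {
                "key": trace.get_key(),
                "name": trace.get_name(),
                # Assumption: get_metadata() returns a dict.
                "metadata": trace.get_metadata(),
            }
            # default=str keeps the export going if a value is not JSON-native.
            handle.write(json.dumps(record, default=str) + "\n")
            count += 1
    return count


def annotate_trace(trace, extra_metadata: dict, score=None) -> None:
    """Attach extra metadata and, optionally, a user feedback score to a trace."""
    trace.log_metadata(extra_metadata)
    if score is not None:
        trace.log_user_feedback(score)
```

The `traces` argument can be any iterable of LLMTraceAPI objects, for example a list built from the lookups by key, by name, or by query described earlier.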